Differential Equations Copyright © 2024 by Amir Tavangar is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.

eCampus Ontario

1

This open-access textbook is designed to make the study of Differential Equations accessible and engaging for everyone. Differential Equations is a resource primarily intended for engineering students, but it’s versatile and beneficial for learners from any discipline. It serves as a comprehensive tool, whether you’re approaching differential equations for the first time or revisiting the topic for a refresher. Instead of delving into theorem proofs or formula derivations, the focus is on offering a step-by-step guide for solving differential equations.

Each chapter in this resource introduces essential concepts and provides illustrative examples with detailed solutions. Following these examples, there are ‘Try An Example’ questions to evaluate your comprehension. These questions are generated dynamically through MyOpenMath, enabling the generation of similar questions and providing immediate feedback to aid your learning process.

Incorporating interactive elements such as videos, dynamic problems, and graphs, the textbook is optimized for web viewing through Pressbooks. This enables full interaction with its multimedia content, from watching instructional videos to engaging with dynamic graphs and problem sets. While a downloadable PDF version is available, it does not include the interactive features found in the web format.

This project has received support and funding from the Government of Ontario and eCampusOntario. The views expressed in this publication are the views of the author(s) and do not necessarily reflect those of the Government of Ontario.

2

I am grateful for the support and contributions that have made this resource possible. First and foremost, my thanks go to the Government of Ontario and eCampusOntario, whose funding and belief in the importance of accessible educational resources have been instrumental in bringing this project to fruition.

I am profoundly grateful to Dr. Mohammad Reza Peyghami for his expertise and thoughtful feedback, which have been instrumental in shaping this resource.

Special thanks are also due to two remarkable Engineering students, Jazel Paco and Minh Khanh Truong, for their keen insights and diligent review, which have significantly enhanced the quality and accuracy of this work.

I extend my gratitude to the myriad of scientists, mathematicians, and educators whose foundational work underpins the concepts and methods presented in this book. While citing all their contributions individually within the text is impractical, their collective efforts have been indispensable. The References section lists key resources that have been instrumental in shaping the content of this book.

3

The web version of this textbook is fully compliant with the Accessibility for Ontarians with Disabilities Act (AODA) requirements and adheres to the Web Content Accessibility Guidelines (WCAG) 2.0, Level AA standards. Furthermore, it aligns with the comprehensive checklist provided in Appendix A: Checklist for Accessibility of the Accessibility Toolkit – 2nd Edition, ensuring it meets the highest standards of accessibility.

Designed with interactivity at its core, the textbook incorporates videos, dynamic problems, graphs, and simulations, making it ideally suited for online learning through Pressbooks. Key accessibility features have been integrated into the web version to accommodate diverse learning needs.

This holistic approach to accessibility ensures that all learners, regardless of their physical abilities, can effectively engage with and benefit from the rich educational content provided in this textbook.

I

This chapter provides an overview of fundamental concepts in differential equations along with an introduction to direction fields for first-order differential equations.

1.1 Introduction: This section covers basic definitions concerning differential equations, including their order, various classifications, and the nature of their solutions.

1.2 Direction Fields: This section briefly introduces direction fields, a tool for visually representing the behavior of solutions to first-order differential equations without needing an exact solution formula.

Émilie du Châtelet, born in Paris in 1706, was a woman of exceptional intellect and determination who carved her unique path in the male-dominated world of science and mathematics during the Enlightenment. Despite societal norms restricting women’s access to formal education, Du Châtelet educated herself in mathematics and physics, often through creative means such as disguising herself as a man to attend lectures. Her most significant work, a translation and commentary on Isaac Newton’s ‘Principia Mathematica’, remains the standard French translation to this day. In it, she clarified Newton’s ideas and expanded on them, particularly in her elucidation of the principle of conservation of energy. Émilie du Châtelet’s work laid the groundwork for future developments in physics and mathematics, including those in differential equations. Her tenacity and brilliance broke through the constraints of her time, paving the way for future generations of women in science, and her legacy continues to inspire and challenge norms in the scientific community.

Differential equations (DEs) are mathematical equations that describe the relationship between a function and its derivatives, either ordinary derivatives or partial derivatives. In their simplest form, they describe the rate at which a quantity changes in terms of the quantity itself and its derivatives. Differential equations are powerful tools in mathematics and science as they enable the modeling of a wide range of real-world phenomena across various disciplines, including physics, engineering, biology, economics, and many others. Here are a few examples of differential equations.

The order of a differential equation is the order of the highest derivative that appears in the equation. For example, if the highest derivative is a second derivative, the equation is of second order. Here are a few examples:

The order of a differential equation often determines the methods used to solve it. The order of a differential equation is independent of the type of derivatives involved, whether they are ordinary or partial derivatives.

Throughout this book, our focus will primarily be on first- and second-order differential equations. As you’ll discover, the methods used to solve second-order differential equations can often be easily extended to tackle higher-order equations.

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=5

An Ordinary Differential Equation (ODE) is a differential equation involving a function of one independent variable and its derivatives. All the above examples except the heat equation are ordinary differential equations.

A Partial Differential Equation (PDE) is a differential equation that contains unknown multivariable functions and their partial derivatives. PDEs are used to formulate problems involving functions of several variables.

In this textbook, our primary focus will be on ordinary differential equations, which involve functions of a single variable. We will only delve into partial differential equations in the final chapter.

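A linear differential equation is one in which the dependent variable and its derivatives appear only to the first power and are not multiplied together. An $n$th-order linear differential equation can be written in the form

$$a_n(x)\,y^{(n)} + a_{n-1}(x)\,y^{(n-1)} + \cdots + a_1(x)\,y' + a_0(x)\,y = g(x),$$

where $y$ is the dependent variable, $x$ is the independent variable, $a_n(x), \ldots, a_0(x)$ are functions of $x$ (which can be constants or zeros), and $g(x)$ is a function of $x$.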

A nonlinear differential equation is one in which the dependent variable or its derivatives appear to a power greater than one, or they are multiplied together, or in any way that does not fit the linear form. For example,

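A differential equation is termed homogeneous if every term in the equation is a function of the dependent variable and its derivatives. For linear differential equations, an equation is homogeneous if the function on the right-hand side, the term that does not involve the dependent variable or its derivatives, is identically zero.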

For example, the linear equation

A differential equation is nonhomogeneous if it includes terms that are not solely functions of the dependent variable and its derivatives. For linear equations, this typically means there is a non-zero function on the right-hand side of the equation. For example, the linear equation

A solution of a differential equation is a function that satisfies the equation on some open interval. This means that when the function and its derivatives are plugged into the differential equation, the equation holds true for all values within the interval. Often there is a whole family of solutions rather than a single one.
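
The definition above can be checked numerically. The following is a minimal sketch, not a general method: the equation $y' = 2y$ and the candidate $y = e^{2x}$ are hypothetical examples chosen for illustration, and the helper names `residual` and `is_solution` are made up for this sketch. It approximates the derivative with a central difference and tests whether the equation holds at sample points inside an open interval.

```python
import math


def residual(y, rhs, x, h=1e-5):
    """Return y'(x) - rhs(x, y(x)), with y'(x) from a central difference."""
    dy = (y(x + h) - y(x - h)) / (2 * h)
    return dy - rhs(x, y(x))


def is_solution(y, rhs, a, b, n=50, tol=1e-6):
    """Check the equation y' = rhs(x, y) at n + 1 points strictly inside (a, b)."""
    xs = [a + (b - a) * (i + 1) / (n + 2) for i in range(n + 1)]
    return all(abs(residual(y, rhs, x)) < tol for x in xs)


# Hypothetical example: does y = exp(2x) satisfy y' = 2y on (-1, 1)?
def candidate(x):
    return math.exp(2 * x)


def right_hand_side(x, y):
    return 2 * y


if __name__ == "__main__":
    print(is_solution(candidate, right_hand_side, -1.0, 1.0))
    print(is_solution(math.sin, right_hand_side, -1.0, 1.0))
```

A numerical check of this kind only samples finitely many points, so it can expose a wrong candidate but does not replace the substitution argument used in the text.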

Verify

First, we find

By substituting

which is equal to the right-hand side of the equation. Since the given

Now, consider the differential equation

An explicit solution explicitly expresses the dependent variable in terms of the independent variable(s). For example,

Initial condition(s) refer to the values specified for the dependent variable and possibly its derivatives at a specific point. Initial conditions are used to determine the specific (or particular) solution of a differential equation from the general solution, which typically contains arbitrary constants. For example,

An Initial Value Problem (IVP) is a differential equation together with initial condition(s) that pin down one particular solution. A solution might not be valid for all real numbers; the set of values of the independent variable on which it is valid is called the interval of validity, or the domain of the solution.

Although having an explicit formula for the solution of a differential equation is useful for understanding the nature of the solution, determining where it increases or decreases, and identifying its maximum or minimum values, finding such a formula is impossible for many real-world differential equations. Consequently, alternative methods are employed to gain insights into these questions. One effective approach for visualizing the solution of a first-order differential equation is to create a direction field for the equation. This method provides a graphical representation of the solution’s behavior without requiring an explicit formula.

We assume that the first-order differential equation

For the equation

The general solution of the equation is

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=89

Figure 1.2.1 Direction field for and solutions to

The arrows in the direction fields represent tangents to the actual solutions of the differential equations. We can use these arrows as guides to sketch the graphs of the solutions to the differential equation, providing a visual representation of how the solutions behave. By following these arrows, we can visually trace the trajectory of a solution over time, which can indicate its long-term behavior.
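
As a rough illustration of how a direction field is built, the sketch below evaluates the slope at each point of a small grid and computes the endpoints of the short tangent segment that would be drawn there. The right-hand side $f(x, y) = x - y$ is a hypothetical example, and the function names `slope_field` and `segment` are assumptions made for this sketch, not part of the textbook.

```python
import math


def slope_field(f, x_values, y_values):
    """Return {(x, y): slope} with slope = f(x, y) at every grid point."""
    return {(x, y): f(x, y) for x in x_values for y in y_values}


def segment(x, y, slope, length=0.4):
    """Endpoints of a segment of the given length centred at (x, y)."""
    dx = 0.5 * length / math.sqrt(1 + slope * slope)
    dy = slope * dx
    return (x - dx, y - dy), (x + dx, y + dy)


def f(x, y):
    # Hypothetical right-hand side of y' = f(x, y)
    return x - y


grid = [-2, -1, 0, 1, 2]
field = slope_field(f, grid, grid)

if __name__ == "__main__":
    for (x, y), slope in sorted(field.items()):
        start, end = segment(x, y, slope)
        print((x, y), slope, start, end)
```

Each segment has the same length regardless of slope, which keeps steep and shallow directions equally visible; the endpoints can be passed to any plotting tool to draw the field.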

II

This chapter delves into first-order differential equations, vital in science and engineering for modeling rates of change in numerous phenomena. It covers their structure, solution techniques, and real-world applications in fields like population dynamics, thermal processes, and electrical circuits.

2.1 Separable First-Order Differential Equations: This section addresses separable differential equations, a category of first-order equations where each variable can be separated on different sides of the equation.

2.2 Linear First-Order Differential Equations: This section covers the solution to first-order nonhomogeneous linear equations.

2.3 Exact Differential Equations: This part explains the criteria for an equation to be exact and outlines methods for solving these equations.

2.4 Integrating Factors: This section explores the techniques of utilizing integrating factors to transform a non-exact equation into an exact equation that can be solved.

2.5 Applications of First-Order Differential Equations: The final section illustrates the use of first-order differential equations in modeling growth and decay, substance mixing, temperature changes, motion under gravity, and circuit behaviors.

Mary Cartwright, born in 1900 in Aynho, Northamptonshire, England, emerged as a pioneering mathematician in an era when female academics were a rarity. Her journey in mathematics began at Oxford University, leading her to Cambridge, where she initially focused on classical analysis. However, it was during World War II, while investigating the problem of radio waves and their interference patterns, that Cartwright made a groundbreaking discovery. Collaborating with J.E. Littlewood, she delved into nonlinear differential equations, and their work laid the foundational stones for what would later be known as chaos theory. Cartwright’s foray into this field produced seminal results, including the Cartwright-Littlewood theorem and her study of the Van der Pol oscillator, a concept critical in the understanding of oscillatory systems. Her extraordinary contributions not only advanced the field of mathematics but also broke gender barriers, setting a precedent for women in STEM. Mary Cartwright’s life was a blend of intellectual rigor and quiet resilience, inspiring a legacy that continues to encourage mathematicians, especially women, to explore and reshape the boundaries of mathematical knowledge.

Separable equations are a type of first-order differential equations that can be rearranged so all terms involving one variable are on one side of the equation and all terms involving the other variable are on the opposite side. This characteristic makes them easier to solve compared to other types of differential equations. Often, these equations represent nonlinear relationships.

Understanding and applying integration techniques is crucial for solving separable equations. Therefore, reviewing and familiarizing yourself with standard integration methods is recommended before attempting to solve these equations.

A first-order differential equation is called separable if it can be written in the form of

where

For example, the equation

To solve the equation

1. Separate variables: multiply both sides by

2. Integrate both sides:

3. Solve for
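
The three steps can be cross-checked against a numerical solution. In the sketch below the equation $y' = xy$ with $y(0) = 1$ is a hypothetical example: separating variables gives $dy/y = x\,dx$, integrating gives $\ln|y| = x^2/2 + C$, and solving for $y$ with the initial condition gives $y = e^{x^2/2}$. The code compares that formula with a classical fourth-order Runge-Kutta integration; the function names are assumptions made for this sketch.

```python
import math


def rk4(f, x0, y0, x_end, steps=1000):
    """Integrate y' = f(x, y) from x0 to x_end with the classical RK4 scheme."""
    h = (x_end - x0) / steps
    x, y = x0, y0
    for _ in range(steps):
        k1 = f(x, y)
        k2 = f(x + h / 2, y + h * k1 / 2)
        k3 = f(x + h / 2, y + h * k2 / 2)
        k4 = f(x + h, y + h * k3)
        y += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        x += h
    return y


def rhs(x, y):
    # Hypothetical separable equation y' = x * y
    return x * y


def separable_solution(x, y0=1.0):
    # Closed form obtained by separating variables, with y(0) = y0
    return y0 * math.exp(x * x / 2)


if __name__ == "__main__":
    print(rk4(rhs, 0.0, 1.0, 2.0), separable_solution(2.0))
```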

Solve the nonlinear equation

1. Multiplying both sides by

2. Integrating both sides, we get

3. Multiplying by 3 and taking the cubic root of both sides, we obtain

By substituting constant

Solve the differential equation

This is a separable differential equation as it can be expressed in the form

1. Multiplying both sides by

2. Integrating both sides, we get

3. Exponentiating both sides yields

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=138

When solving nonlinear differential equations using the separable method, it is crucial to consider the interval of validity, which is the range of the independent variable, typically

Additionally, due to the nature of nonlinear equations, certain initial conditions might lead to no solution or multiple solutions, emphasizing the need to carefully select and verify the range of

Solve the initial value problem

Find the general solution:

After factoring out

1. Multiplying both sides by

2. Integrating both sides, we get

3. Multiplying by 7 and exponentiating both sides, we obtain

By rearranging the equation and substituting

Applying the initial condition:

The solution to the IVP is then

There is no restriction on the domain of

Solve the initial value problem and find the interval of validity of the solution.

Find the general solution:

This is a separable differential equation as it can be expressed in the form

1. Multiplying both sides by

2. Integrating both sides, we get

3. Multiplying by -1 and taking the reciprocal of both sides, we obtain the explicit solution

Applying the initial condition:

The solution to the IVP is then

Find the interval of validity:

To establish the interval of validity for the solution, we need to consider two constraints:

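- The solution must be defined and continuous, which excludes any value of the independent variable at which its denominator vanishes.

- The interval must contain the point at which the initial condition is given.

The interval of validity is the range of the independent variable that satisfies both constraints.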

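A first-order differential equation is classified as linear if it can be written as

$$y' + p(x)\,y = q(x). \tag{2.2.1}$$

A first-order differential equation that cannot be expressed in that form is called nonlinear. If $q(x) = 0$ for all $x$, the equation is called homogeneous; otherwise, it is nonhomogeneous.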

Some equations may not appear to be linear at first, such as

Theorem: If

In our discussions within this text, we will not always explicitly mention the interval when seeking the general solution of a specific linear first-order equation. By default, this implies that we are looking for the general solution on every open interval where the coefficient function $p$ and the right-hand-side function $q$ in the equation are continuous.

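To solve Equation 2.2.1, $y' + p(x)\,y = q(x)$, we start by assuming that the solution can be expressed as $y = u\,y_1$, where $y_1$ is a known solution to the corresponding homogeneous equation (called the complementary equation), $y' + p(x)\,y = 0$, and $u$ is an unknown function to be determined. Substituting $y = u\,y_1$ into Equation 2.2.1 gives

$$u'\,y_1 + u\,y_1' + p\,u\,y_1 = q.$$

By simplifying and rearranging, we obtain

$$u'\,y_1 + u\,(y_1' + p\,y_1) = q.$$

Since $y_1$ is a solution to the complementary equation, $y_1' + p\,y_1 = 0$, simplifying the expression to $u'\,y_1 = q$. Integrating both sides of $u' = q/y_1$ allows us to determine $u$:

$$u = \int \frac{q(x)}{y_1(x)}\,dx + C.$$

With $y_1 = e^{-\int p(x)\,dx}$ and the integrating factor $\mu(x) = 1/y_1 = e^{\int p(x)\,dx}$, the general solution is

$$y = u\,y_1 = \frac{1}{\mu(x)}\left(\int \mu(x)\,q(x)\,dx + C\right).$$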

Now that we understand the derivation of the solution, let’s outline the solution process in the following steps.

1. Write the equation in the standard form $y' + p(x)\,y = q(x)$.

2. Calculate the integrating factor $\mu(x) = e^{\int p(x)\,dx}$, letting the constant of integration be zero for convenience.

3. Integrate the right-hand side of $y = \frac{1}{\mu(x)}\left(\int \mu(x)\,q(x)\,dx + C\right)$ and simplify where possible. Ensure you properly deal with the constant of integration.
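
The steps above can be sketched numerically. This is only an illustration under stated assumptions: the equation is taken in the standard form $y' + p(x)\,y = q(x)$ with an initial condition $y(x_0) = y_0$, the integrals are approximated with Simpson's rule instead of being found by hand, and the names `integrate` and `solve_linear` are made up for this sketch. The test case $y' + 2y = 4$, $y(0) = 1$ is hypothetical; by hand it gives $\mu = e^{2x}$ and $y = 2 - e^{-2x}$.

```python
import math


def integrate(g, a, b, n=400):
    """Composite Simpson rule for the integral of g over [a, b] (n must be even)."""
    h = (b - a) / n
    total = g(a) + g(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * g(a + i * h)
    return total * h / 3


def solve_linear(p, q, x0, y0, x):
    """Value at x of the solution of y' + p(x) y = q(x) with y(x0) = y0."""

    def mu(s):
        # Integrating factor exp(integral of p), normalised so that mu(x0) = 1
        return math.exp(integrate(p, x0, s))

    # From (mu * y)' = mu * q: mu(x) y(x) - y0 = integral of mu * q from x0 to x
    return (y0 + integrate(lambda s: mu(s) * q(s), x0, x)) / mu(x)


if __name__ == "__main__":
    numeric = solve_linear(lambda x: 2.0, lambda x: 4.0, 0.0, 1.0, 1.5)
    by_hand = 2 - math.exp(-3.0)
    print(numeric, by_hand)
```

Normalising the integrating factor so that $\mu(x_0) = 1$ plays the role of fixing the constant of integration from the initial condition.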

Occasionally, the function

Find the general solution to

1. First, we multiply by

So

2. Thus, the integrating factor is

3. Substituting into the general formula, we obtain

Figure 2.2.1 depicts the sketches of the solutions for various values of constant

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=144

Figure 2.2.1 Graph of

Theorem – Existence and Uniqueness of solution: If

a) The general solution to the nonhomogeneous equation is

b) If

Solve the initial value problem

Find the general solution:

1. First, we rearrange the equation to put it in the standard form:

Therefore,

2. The integrating factor is

3. Substituting into the general formula for the solution, we obtain

Apply the initial condition to find C:

The solution to the IVP then is

Exact differential equations are a class of first-order differential equations that can be solved using a particular integrability condition. This section will discuss what makes an equation exact, how to verify this condition, and the methodology for solving such equations.

We begin by introducing a foundational theorem followed by an illustrative example to demonstrate its application. Following that, we delve into the concept of exact equations and explore a method for solving them.

Theorem: If function

The theorem can be proven by using implicit differentiation.

Show that

To apply the theorem effectively, we need to define

letting

We observe that

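We now shift our focus to a broader understanding of exact differential equations. Consider a differential equation expressed as

$$M(x, y) + N(x, y)\,\frac{dy}{dx} = 0,$$

which can also be represented as

$$M(x, y)\,dx + N(x, y)\,dy = 0.$$

An equation of this form is called exact if there is a function $F(x, y)$ such that $F_x(x, y) = M(x, y)$ and $F_y(x, y) = N(x, y)$. Its solutions are then given implicitly by $F(x, y) = C$.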

For instance, the equations

Now the pertinent questions are

1. How can we tell whether a given equation is exact?

2. If it is exact, how do we find the function $F$ and thus a solution?

To answer the first question, suppose there is a function $F(x, y)$ with partial derivatives $F_x$ and $F_y$ that match the coefficient functions $M$ (of $dx$) and $N$ (of $dy$), respectively. If $F$ and its partial derivatives $F_x$ and $F_y$ are continuous, then the cross partial derivatives of $F$ must be equal:

$$F_{xy} = F_{yx},$$

or equivalently,

$$M_y = N_x.$$

This relationship is summarized in the theorem below.

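Theorem. Suppose that the first derivatives of $M(x, y)$ and $N(x, y)$ are continuous in a rectangle $R$. Then the equation

$$M(x, y)\,dx + N(x, y)\,dy = 0$$

is exact in $R$ if and only if $M_y(x, y) = N_x(x, y)$ for all $(x, y)$ in $R$.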

To address the second question of solving an exact differential equation, follow the step-by-step procedure outlined below.

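1*. Find $F(x, y)$ by integrating $M(x, y)$ with respect to $x$, treating $y$ as a constant and including an arbitrary function $g(y)$ in place of the constant of integration: $F(x, y) = \int M(x, y)\,dx + g(y)$.

2. Determine the Arbitrary Function:

a. To find $g(y)$, differentiate $F(x, y)$ with respect to $y$ and set the result equal to $N(x, y)$: $F_y(x, y) = N(x, y)$.

b. After isolating $g'(y)$, integrate it with respect to $y$ to obtain $g(y)$.

3. Form the general Solution: The solution to $M(x, y)\,dx + N(x, y)\,dy = 0$ is given implicitly by

$$F(x, y) = C,$$

where $C$ is an arbitrary constant.

*Note: As an alternative method, you might also start by integrating $N(x, y)$ with respect to $y$, including an arbitrary function $h(x)$, and then determine $h(x)$ by setting $F_x(x, y) = M(x, y)$.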
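
The exactness test and the resulting function can be sanity-checked numerically. In the sketch below, the equation $2xy\,dx + (x^2 + 3y^2)\,dy = 0$ is a hypothetical example, and the names `M`, `N`, `F`, `partial`, and `is_exact` are assumptions made for this illustration. Following the procedure by hand, integrating $M$ with respect to $x$ gives $F = x^2 y + g(y)$, matching $F_y$ with $N$ gives $g'(y) = 3y^2$, and so $F = x^2 y + y^3$. The code approximates the partial derivatives with central differences.

```python
def partial(g, x, y, var, h=1e-5):
    """Central-difference approximation of the partial derivative of g(x, y)."""
    if var == "x":
        return (g(x + h, y) - g(x - h, y)) / (2 * h)
    return (g(x, y + h) - g(x, y - h)) / (2 * h)


def is_exact(M, N, points, tol=1e-6):
    """Test M_y = N_x at the sample points (a necessary numerical check only)."""
    return all(
        abs(partial(M, x, y, "y") - partial(N, x, y, "x")) < tol
        for x, y in points
    )


# Hypothetical exact equation M dx + N dy = 0
def M(x, y):
    return 2 * x * y


def N(x, y):
    return x * x + 3 * y * y


def F(x, y):
    # Function found by hand with the step-by-step procedure
    return x * x * y + y ** 3


points = [(i / 2, j / 2) for i in range(-4, 5) for j in range(-4, 5)]

if __name__ == "__main__":
    print(is_exact(M, N, points))
```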

Determine if the equation is exact and if so find the solution:

1) Test for Exactness:

Since

2) Find the solution:

1. We know

2a. To find

Since

By comparing, we determine that

2b. By integrating

3. Thus, an implicit solution to the differential equation is

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=146

a) Solve the initial value problem and find the explicit solution

a)

1) Test for Exactness:

Since

2) Find the general solution:

We have the option to integrate

1.

It is important to note that we include an arbitrary function of

2a. To find

Since

2b. By integrating

3. Thus, an implicit solution to the differential equation is

Apply the initial condition:

The solution to the IVP then is

We need to find the explicit solution, so we rearrange the equation to solve for

b) Find the interval of validity:

To establish the interval of validity for the solution, we need to ensure the denominator of the rational function is not equal to zero to avoid undefined expressions:

Therefore, the interval of validity for the solution is

When faced with a non-exact first-order differential equation, the method of integrating factors provides a systematic way to transform it into an exact equation that can be solved. This section explores the techniques of utilizing integrating factors for solving differential equations.

Sometimes a differential equation that is not initially exact can be transformed into an exact one by multiplying through by an appropriate function,

It is not exact because

This equation is now exact as

The function

makes it exact, then

When you encounter a first-order differential equation in the form $M(x, y)\,dx + N(x, y)\,dy = 0$, the following steps help determine whether a special integrating factor exists:

1. Compute partial derivatives: Compute $M_y$ and $N_x$.

2. Check for exactness:

- If $M_y = N_x$, then the equation is already exact, and no integrating factor is needed.

- If $M_y \neq N_x$, the equation is not exact, and you may proceed to find an integrating factor.

3. Find a special integrating factor:

- Compute the expression $\dfrac{M_y - N_x}{N}$ (i). If (i) is a function of $x$ only, then an integrating factor is given by $\mu(x) = e^{\int \text{(i)}\,dx}$.

- If (i) is not a function of $x$ only, compute the expression $\dfrac{N_x - M_y}{M}$ (ii). If (ii) is a function of $y$ only, then an integrating factor is given by $\mu(y) = e^{\int \text{(ii)}\,dy}$.

4. Apply the integrating factor: Multiply the entire differential equation by the integrating factor $\mu$ to obtain an exact equation.

5. Solve the exact equation: Once the equation is made exact, solve it using the method outlined in Section 2.3 for exact equations.
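
The procedure can be illustrated with a small numerical check. The equation $(3xy + y^2)\,dx + (x^2 + xy)\,dy = 0$ is a hypothetical example, not one from the text, and all function names are assumptions made for this sketch. By hand, $M_y - N_x = x + y$ and $N = x(x + y)$, so the ratio $(M_y - N_x)/N = 1/x$ depends on $x$ only and $\mu(x) = e^{\int dx/x} = x$ for $x > 0$. The code confirms that the ratio is independent of $y$ and that the multiplied equation passes the exactness test.

```python
def d_dx(g, x, y, h=1e-5):
    """Central-difference approximation of the partial derivative in x."""
    return (g(x + h, y) - g(x - h, y)) / (2 * h)


def d_dy(g, x, y, h=1e-5):
    """Central-difference approximation of the partial derivative in y."""
    return (g(x, y + h) - g(x, y - h)) / (2 * h)


# Hypothetical non-exact equation M dx + N dy = 0
def M(x, y):
    return 3 * x * y + y * y


def N(x, y):
    return x * x + x * y


def ratio_x(x, y):
    """The expression (M_y - N_x) / N used to look for a factor mu(x)."""
    return (d_dy(M, x, y) - d_dx(N, x, y)) / N(x, y)


def mu(x):
    # exp(integral of 1/x dx) = x for x > 0, found by hand for this example
    return x


def M_exact(x, y):
    return mu(x) * M(x, y)


def N_exact(x, y):
    return mu(x) * N(x, y)


if __name__ == "__main__":
    for x in (0.5, 1.0, 2.0):
        print([round(ratio_x(x, y), 6) for y in (0.5, 1.0, 3.0)])
```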

Solve

A quick inspection shows that the equation is neither separable nor linear nor exact. Therefore, we check if a special integrating factor exists:

Since (i) is the function of only

Multiplying

Solving the equation using the exact method, we get the implicit solution

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=148

Mathematical modeling is the process of translating real-world problems into mathematical language. This involves formulating, developing, and rigorously testing models to represent and solve complex issues. Differential equations, including both ordinary and partial types, are instrumental in these models. They relate some function with its derivatives, representing rates of change. This makes them particularly suited to modeling dynamic systems where understanding how things evolve is crucial.

In this section, we will explore how first-order differential equations are applied across various domains, including growth and decay processes, substance mixing, Newton’s law of cooling, the dynamics of falling objects, and the analysis of electrical circuits.

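One of the most common applications of first-order differential equations is in modeling population growth or decline. The models provide insights into how populations change over time due to births, deaths, immigration, and emigration. The simplest model for population growth is the Exponential Growth Model, which assumes an unlimited resource environment. It is represented by the differential equation:

$$\frac{dP}{dt} = kP,$$

where $P(t)$ is the population at time $t$ and $k$ is the constant growth rate. This separable equation has the solution

$$P(t) = P_0\,e^{kt},$$

where $P_0 = P(0)$ is the initial population.

If $k > 0$, the population grows exponentially; if $k < 0$, it decays exponentially.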

When dealing with problems where there are different rates of population entering and exiting a region, the key is to understand that the overall rate of change of the population is the result of the difference between the rate of population entering (immigration or birth) and the rate of population leaving (emigration or death). This can be represented as a differential equation that models the net change in population over time. The general approach is to set up a balance equation reflecting these rates:

$$\frac{dP}{dt} = \text{rate in} - \text{rate out}.$$

Here, the rate in collects births and immigration, and the rate out collects deaths and emigration.

A fish population in a lake grows at a rate proportional to its current size. Without outside factors, the fish population doubles in 10 days. However, each day, 5 fish migrate into the area, 16 are caught by fishermen, and 7 die of natural causes. Determine if the population will survive over time and, if not, when the population will become extinct. The initial population is 200 fish.

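Before we solve this IVP, we need to find the growth constant $k$. Without outside factors, the population satisfies $\frac{dP}{dt} = kP$.

The general solution to this separable differential equation is $P(t) = Ce^{kt}$.

Applying the initial condition $P(0) = 200$, we obtain $C = 200$. Since the population doubles in 10 days, $P(10) = 400$, which gives $e^{10k} = 2$ and $k = \frac{\ln 2}{10} \approx 0.0693$ per day.

Now, we return to the original differential equation. Each day 5 fish enter and $16 + 7 = 23$ fish are lost, so

$$\frac{dP}{dt} = kP + 5 - 23 = kP - 18, \qquad P(0) = 200.$$

This is a linear differential equation. We write it in standard form:

$$\frac{dP}{dt} - kP = -18.$$

The integrating factor is $\mu(t) = e^{-kt}$.

The general solution is

$$P(t) = \frac{18}{k} + Ce^{kt}.$$

Applying the initial condition gives $200 = \frac{18}{k} + C$, so $C = 200 - \frac{180}{\ln 2} \approx -59.69$.

Thus the specific solution is

$$P(t) \approx 259.69 - 59.69\,e^{0.0693t}.$$

The exponential term has a positive exponent and thus grows exponentially. However, since the coefficient of the exponential term is negative, the whole population declines and becomes extinct eventually. To determine when the population will become extinct, we set $P(t) = 0$ and solve for $t$:

$$t = \frac{10}{\ln 2}\,\ln\!\left(\frac{259.69}{59.69}\right) \approx 21.2 \text{ days}.$$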
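
The fish example can be cross-checked with a few lines of code. The sketch below is built directly from the problem statement (doubling time of 10 days, net daily change of $5 - 16 - 7$ fish, initial population 200); the variable and function names are assumptions made for this illustration. It uses the closed-form solution of $dP/dt = kP - 18$ and solves $P(t) = 0$ for the extinction time.

```python
import math

k = math.log(2) / 10   # growth constant from the 10-day doubling time
net_flow = 5 - 16 - 7  # fish per day: migration in, catch, natural deaths
P0 = 200               # initial population

# Constant solution of dP/dt = k*P + net_flow (here 18 / k)
equilibrium = -net_flow / k


def population(t):
    """Solution of dP/dt = k*P + net_flow with P(0) = P0."""
    return equilibrium + (P0 - equilibrium) * math.exp(k * t)


def extinction_time():
    """Time at which population(t) reaches zero (requires P0 < equilibrium)."""
    return math.log(equilibrium / (equilibrium - P0)) / k


if __name__ == "__main__":
    print(round(equilibrium, 2), round(extinction_time(), 2))
```

Because the initial population of 200 lies below the equilibrium value of about 260, the solution moves away from the equilibrium toward zero, which is why extinction occurs.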

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=150

Mixing problems involve combining substances or quantities and observing how they interact over time. This can refer to pollutants in a lake, different chemicals in a reactor, or even sugar dissolving in coffee. The common element in these scenarios is the change in concentration of substances in a mixture over time. Through differential equations, specifically first-order ones, we can model and solve these dynamic situations.

In mixing problems,

For a typical mixing problem, you might have a tank that contains a certain amount of fluid into which another substance is being mixed. The concentration of the substance in the tank changes as more of the substance is added or removed. The general first-order differential equation for such a scenario is similar to what we discussed for the population change of a region.

Here

Consider a tank holding 2000 liters of fresh water. Starting at

Given information

The volume of water in the tank ($V$) is constant since water inflow and outflow are equal: $V = 2000$ L.

a) Our task is to determine the rate at which salt enters the tank (

The rate at which salt enters the tank is the product of the salt concentration of the incoming water and the water inflow rate:

The rate at which salt leaves the tank is the concentration of salt in the tank (ratio of the salt in the tank to the volume of water in the tank), multiplied by the water outflow rate. At any time, the quantity of salt in the tank is

The tank initially has pure fresh water without any salt, so

The differential equation is separable (and linear) and can be solved easily. The solution to the IVP is

This equation gives us the amount of salt in the tank

b) To determine when the concentration of salt in the tank reaches

Now, we need to solve for t when

The concentration of salt in the tank will reach 0.04 kg/L approximately at
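
A constant-volume mixing model of this kind can be sketched in code. Only the tank volume (2000 L) and the target concentration (0.04 kg/L) come from the text; the flow rate of 20 L/min and the inflow concentration of 0.05 kg/L are placeholder values assumed purely for illustration, so the printed time is not the answer to the example above. The model is $dA/dt = c_{in}\,r - (A/V)\,r$, where $A$ is the amount of salt, and the function names are made up for this sketch.

```python
import math


def salt_amount(t, volume, flow_rate, inflow_conc, initial_salt=0.0):
    """Amount of salt at time t for equal inflow and outflow rates."""
    steady = inflow_conc * volume  # limiting amount of salt as t grows
    return steady + (initial_salt - steady) * math.exp(-flow_rate * t / volume)


def time_to_concentration(target_conc, volume, flow_rate, inflow_conc,
                          initial_salt=0.0):
    """Time at which the concentration in the tank reaches target_conc."""
    steady = inflow_conc * volume
    ratio = (target_conc * volume - steady) / (initial_salt - steady)
    if not 0 < ratio <= 1:
        raise ValueError("target concentration is never reached")
    return -volume / flow_rate * math.log(ratio)


if __name__ == "__main__":
    # Placeholder flow rate (L/min) and inflow concentration (kg/L)
    t = time_to_concentration(0.04, 2000.0, 20.0, 0.05)
    print(t, salt_amount(t, 2000.0, 20.0, 0.05) / 2000.0)
```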

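Newton’s Law of Cooling describes the rate at which an object’s temperature changes when it is exposed to a surrounding environment with a different, constant temperature. The fundamental principle is that the rate of change of temperature ($dT/dt$) is proportional to the difference between the object’s temperature and the temperature of its surroundings:

$$\frac{dT}{dt} = -k\,(T - T_s).$$

Here, $T(t)$ is the temperature of the object at time $t$, $T_s$ is the constant temperature of the surroundings, and $k > 0$ is the cooling constant.

This differential equation is separable (and linear) and has the solution

$$T(t) = T_s + (T_0 - T_s)\,e^{-kt}, \tag{2.5.1}$$

where $T_0 = T(0)$ is the initial temperature of the object.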

The negative sign in the exponent indicates that the temperature difference between the object and its surroundings decreases exponentially over time. This formula applies whether the object is initially hotter or cooler than the surroundings, depicting both cooling and warming processes under the law’s assumptions.

Consider a microprocessor that operates in an environment where the room temperature is constant at 25 °C. After a long period of operation, the microprocessor’s temperature is at 75 °C. Once the device is turned off, the microprocessor begins to cool down to room temperature. Suppose the characteristic cooling constant

Given information:

a) Plugging the given values into the solution to Newton’s Law of Cooling equation, Equation 2.5.1, gives the formula for

b) To find the temperature of the microprocessor 10 minutes after the device is turned off, plug in

c) To find the time when the temperature is 35 °C, rearrange the formula when

It takes 23 minutes for the microprocessor to cool down to 35 °C.
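
The calculations in this example can be reproduced with a short script. The room temperature (25 °C) and initial temperature (75 °C) come from the problem statement; the cooling constant $k = 0.07$ per minute is an assumption made here because it reproduces the 23-minute answer quoted above, and the function names are made up for this sketch.

```python
import math


def temperature(t, T_env, T_start, k):
    """Newton's Law of Cooling: temperature after time t."""
    return T_env + (T_start - T_env) * math.exp(-k * t)


def time_to_reach(T_target, T_env, T_start, k):
    """Time needed for the temperature to reach T_target."""
    return -math.log((T_target - T_env) / (T_start - T_env)) / k


if __name__ == "__main__":
    K_ASSUMED = 0.07  # per minute; assumed value, not stated in the text above
    print(temperature(10, 25.0, 75.0, K_ASSUMED))
    print(time_to_reach(35.0, 25.0, 75.0, K_ASSUMED))
```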

The dynamics of falling objects represent a classic example of how differential equations model real-world situations. This phenomenon is directly connected to Newton’s Second Law of Motion, which states that the force acting on an object is equal to the mass of the object times its acceleration.

In this equation, the force may depend on time (

Solving this equation yields

The simplest model of a falling object applies Newton’s Second Law by considering gravity as the only force acting on the object. Here, the force due to gravity is, leading to the differential equation

where $g$ is the acceleration due to gravity, and the mass $m$ is assumed to be constant. This model assumes no air resistance and that the gravitational field is uniform. The approximate value of $g$ near the Earth’s surface is $9.8\ \text{m/s}^2$.

In reality, as an object falls, it encounters air resistance, which opposes the motion of the object. The net force on the object then becomes a combination of gravity and air resistance, modifying the equation to

where

The force of air resistance is often proportional to the velocity of the object and thus

As the object falls, air resistance increases with velocity until it balances the gravitational force. At this equilibrium point, the net force is zero, and the object no longer accelerates, reaching a constant velocity, known as terminal velocity.

Consider an object that has a mass of 25 kg and is initially moving downward with a velocity of -29 m/s. The object is falling through the atmosphere, which exerts a resistive force against its motion. This resistive force is proportional to the object’s velocity. Specifically, when the object’s speed is 2 m/s, the magnitude of the resistive force is 20 N. a) Write the differential equation that describes the motion of the object in terms of its velocity and time. b) Solve the differential equation to find the velocity of the object as a function of time, $v(t)$. c) Find the terminal velocity of the object.

Given information

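a) Downward velocity is expressed as a negative value. Therefore, the upward direction is positive and the downward direction is negative.

Two primary forces acting on the object are gravity and air resistance. The force of gravity always acts downward, which we consider negative in our coordinate system, and is given by

$$F_g = -mg$$

On the other hand, air resistance acts in the opposite direction of the object’s motion, providing an upward force when the object is falling downward. This force is represented as

$$F_r = -kv$$

where $k > 0$ is the constant of proportionality. Since $v < 0$ for a falling object, $F_r$ is positive (upward).

Combining these forces, the equation of motion is

$$m\frac{dv}{dt} = -mg - kv$$

We can use the information about the magnitude of air resistance to find $k$:

$$k = \frac{20\ \text{N}}{2\ \text{m/s}} = 10\ \text{N·s/m}$$

Plugging in the values with initial condition $v(0) = -29$ gives

$$25\frac{dv}{dt} = -25g - 10v, \qquad v(0) = -29$$

b) This is a separable (and linear) differential equation. The general solution of the equation is

$$v(t) = -2.5g + Ce^{-0.4t}$$

Applying the initial condition yields $C = 2.5g - 29$, so

$$v(t) = -2.5g + (2.5g - 29)\,e^{-0.4t}$$

c) The terminal velocity is

$$\lim_{t\to\infty} v(t) = -2.5g \approx -24.5\ \text{m/s} \quad (\text{taking } g = 9.8\ \text{m/s}^2)$$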
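As a rough numerical companion to this example, the sketch below evaluates the velocity for a linear-drag model $m\,v' = -mg - kv$ with the upward direction positive. The symbol $k$ for the drag coefficient, the function name, and the default $g = 9.8\ \text{m/s}^2$ are assumptions made for illustration.

```python
import math


def linear_drag_velocity(t, m, k, v0, g=9.8):
    """Velocity for m v' = -m g - k v (upward positive) with v(0) = v0."""
    v_terminal = -m * g / k  # velocity at which gravity and drag balance
    return v_terminal + (v0 - v_terminal) * math.exp(-k * t / m)


# Data from the example: 25 kg mass, 20 N of drag at a speed of 2 m/s,
# initial velocity -29 m/s (downward).
MASS = 25.0
DRAG_COEFF = 20.0 / 2.0  # k in N*s/m
V_INITIAL = -29.0

if __name__ == "__main__":
    for t in (0.0, 1.0, 5.0, 20.0):
        print(t, round(linear_drag_velocity(t, MASS, DRAG_COEFF, V_INITIAL), 3))
```

Because the object starts faster than its terminal speed, the computed velocity rises toward the terminal value from below rather than falling toward it.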


Electrical circuits are integral to technological advancements, functioning based on the interplay of components such as resistors, inductors, and capacitors. In this section, we specifically discuss the application of first-order differential equations to analyze electrical circuits composed of a voltage source with either a resistor and inductor (RL) or a resistor and capacitor (RC), as illustrated in Figure 2.5.1. Circuits containing both an inductor and a capacitor, known as RLC circuits, are governed by second-order differential equations, a topic we will revisit in the following chapter.

Figure 2.5.1 (a) RL series circuit and (b) RC series circuit

Kirchhoff’s laws—current law and voltage law—form the foundational principles governing electrical circuits. Kirchhoff’s current law states that the total current entering a junction must equal the total current leaving, implying that the algebraic sum of currents in a node is zero. Kirchhoff’s voltage law asserts that the algebraic sum of all voltages around any closed loop in a circuit must equal zero.

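Kirchhoff’s current law implies that the same current passes through all elements in the circuits in Figure 2.5.1. To apply Kirchhoff’s voltage law, understanding the voltage drop across each component is crucial:

a) Ohm’s law dictates that the voltage drop $E_R$ across a resistor is proportional to the current $I$ passing through it: $E_R = RI$, where $R$ is the resistance.

b) Faraday’s law, complemented by Lenz’s law, describes that the voltage drop $E_L$ across an inductor is proportional to the rate of change of the current: $E_L = L\,\dfrac{dI}{dt}$, where $L$ is the inductance.

c) The voltage drop $E_C$ across a capacitor is proportional to the electrical charge $q$ on the capacitor: $E_C = \dfrac{q}{C}$, where $C$ is the capacitance.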

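In this section, we derive the mathematical model for an RL circuit as shown in Figure 2.5.1, while the model derivation for an RC circuit is left as an exercise. Consider an RL series circuit driven by a voltage source $E(t)$. By Kirchhoff’s voltage law, the voltage drops across the inductor and the resistor add up to the source voltage:

$$L\frac{dI}{dt} + RI = E(t)$$

where $I(t)$ is the current, $L$ is the inductance, and $R$ is the resistance,

or in the standard form

$$\frac{dI}{dt} + \frac{R}{L}\,I = \frac{E(t)}{L}$$

To solve this linear differential equation, we use an integrating factor $\mu(t) = e^{\int (R/L)\,dt} = e^{Rt/L}$.

The general solution for the current is

$$I(t) = e^{-Rt/L}\left[\int e^{Rt/L}\,\frac{E(t)}{L}\,dt + C\right] \qquad (2.5.3)$$

With a specific source voltage $E(t)$ and an initial current $I(0)$, the integral can be evaluated and the constant $C$ determined.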
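For the simplest case of a constant source voltage, the RL model $L\,I' + R\,I = E_0$ has the closed-form solution $I(t) = E_0/R + (I_0 - E_0/R)\,e^{-Rt/L}$. The sketch below evaluates it; the symbols $I$, $E_0$, $I_0$, the function name, and the component values are hypothetical and chosen only for illustration.

```python
import math


def rl_current(t, R, L, E0, I0=0.0):
    """Current in a series RL circuit with constant source E0: L I' + R I = E0."""
    steady_state = E0 / R  # current once the transient has died out
    return steady_state + (I0 - steady_state) * math.exp(-R * t / L)


def inductor_voltage(t, R, L, E0, I0=0.0):
    """Voltage across the inductor, L * dI/dt, from the same closed form."""
    return (E0 - R * I0) * math.exp(-R * t / L)


if __name__ == "__main__":
    # Hypothetical values: 10 ohm resistor, 2 H inductor, 5 V source.
    for t in (0.0, 0.2, 1.0):
        i = rl_current(t, R=10.0, L=2.0, E0=5.0)
        print(t, round(i, 4), round(inductor_voltage(t, 10.0, 2.0, 5.0), 4))
```

At every instant the inductor and resistor voltages returned by these formulas add up to the source voltage, which is Kirchhoff’s voltage law for this loop.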

Consider an RL circuit with a resistor of

Given information:

a) Finding the current

The differential equation for an RL series circuit using Kirchhoff’s voltage law is

Plugging in the given values, we obtain

This is a first-order linear non-homogeneous differential equation.

Equation 2.5.3 gives the solution to this differential equation.

The right-hand side involves an integral with the exponential and sinusoidal terms that is typically solved using integration by parts. We only provide the final solution of the integral, leaving the detailed integration steps as an exercise for further exploration.

Which further simplifies to

Applying the initial condition yields

Therefore, the current is

b) Finding the voltage across the inductor

To find the voltage across the inductor, we first need to differentiate

Therefore, the voltage across the inductor is

c) Finding the voltage across the resistor

Similarly, the voltage across the resistor is found by


denote the quantity (kg) of salt at time $t$ (min). a) Write a differential equation for the quantity of salt. b) Find the quantity of salt in the tank at time $t$. c) Determine when the concentration of the salt in the tank will reach 0.1 kg/L. Round to the nearest minute.

a)

b)

c) 146 min

denote the temperature, in Celsius, at time $t$, in minutes. (a) Find the temperature of the fluid at time $t$. (b) Find the temperature of the fluid 15 minutes after it is placed outside. Round your answer to two decimal places.

a)

b)

has an initial downward velocity of  . Assume that the atmosphere exerts a resistive force with a magnitude proportional to the speed. The resistance is  when the velocity is  . Use  . a) Write a differential equation in terms of the velocity and acceleration. b) Find the velocity of the object.

a)

b)

resistor and a  inductor is driven by the voltage  . If the initial resistor current is  , find the current, the voltages across the inductor and the resistor in terms of time $t$. Find the current  .

III

This chapter discusses linear second-order differential equations, a fundamental class of equations in the study of mathematics, physics, and engineering. It explores their structure and techniques for solving them and discusses how they model real-world systems such as mechanical vibratory systems and electrical circuits.

3.1 Homogeneous Equations: This section discusses homogeneous linear second-order differential equations, where there is no external forcing function. The general solution involves finding two linearly independent solutions, which form the foundation of all possible solutions.

3.2 Constant Coefficient Equations: This section focuses on constant coefficient homogeneous equations.

3.3 Non-Homogeneous Equations: This section explores nonhomogeneous equations, which model systems influenced by external forces or inputs.

The chapter proceeds to introduce various methods for solving equations with variable coefficients and nonhomogeneous structures.

3.4 Method of Undetermined Coefficients: This method is effective for non-homogeneous equations with constant coefficients.

3.5 Variation of Parameters: A versatile technique for more general cases.

3.6 Reduction of Order: Useful for finding a second solution when one solution is already known.

3.7 Cauchy-Euler Equation: Specifically for equations with variable coefficients in a particular form.

The chapter concludes by applying these concepts to physical and engineering scenarios.

3.8 Mechanical Systems: This section examines the behavior of spring-mass systems, including free, forced, damped, and undamped vibrations.

3.9 Electrical Circuits: This section discusses the analysis of RLC circuits, which incorporate a resistor, inductor, and capacitor.

Elbert Frank Cox, born in 1895 in Evansville, Indiana, holds a monumental place in history as the first African-American to earn a Ph.D. in mathematics. Overcoming the pervasive racial barriers of his time, Cox’s unwavering determination led him to earn his doctoral degree from Cornell University in 1925. His groundbreaking dissertation, “The Polynomial Solutions of the Difference Equation,” laid the foundation for significant advancements in the field of differential equations. Cox’s academic journey was not just a personal achievement but a beacon of inspiration, symbolizing the potential for extraordinary accomplishment despite systemic obstacles. After earning his Ph.D., he dedicated his life to education, teaching at historically black colleges and universities and mentoring the next generation of mathematicians. Elbert Frank Cox’s legacy transcends his mathematical contributions; it is a testament to resilience and intellectual brilliance in the face of societal challenges, paving the way for future scholars of diverse backgrounds.

A linear second-order differential equation takes the form:

Here,

Unique Solution Theorem. If

Linear Combination Theorem. Suppose

The set of solutions,

Theorem on Linear Independence. If

Abel’s Theorem. If

Abel’s Theorem is a powerful tool for analyzing the solutions’ behavior across an interval, affirming that if the Wronskian is non-zero at one point and

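Equivalence Theorem: For two solutions $y_1$ and $y_2$ of the homogeneous equation on an interval $(a, b)$ on which its coefficient functions are continuous, the following statements are equivalent:

a) The general solution of the equation on $(a, b)$ is $y = c_1y_1 + c_2y_2$.

b) $\{y_1, y_2\}$ is a fundamental set of solutions of the equation on $(a, b)$.

c) $\{y_1, y_2\}$ is linearly independent on $(a, b)$.

d) The Wronskian of $\{y_1, y_2\}$ is nonzero at some point in $(a, b)$.

e) The Wronskian of $\{y_1, y_2\}$ is nonzero at all points in $(a, b)$.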

With these foundational theorems, we have the necessary tools to start solving homogeneous linear second-order differential equations and prepare for the complexities of non-homogeneous cases.
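To make the Wronskian test concrete, the following sketch approximates $W[y_1, y_2](t) = y_1y_2' - y_1'y_2$ with central differences. It is a numerical illustration only: the function name, the step size `h`, and the sample function pairs are our own choices, not examples from the text.

```python
import math


def wronskian(y1, y2, t, h=1e-5):
    """Approximate W[y1, y2](t) = y1*y2' - y1'*y2 using central differences."""
    dy1 = (y1(t + h) - y1(t - h)) / (2 * h)
    dy2 = (y2(t + h) - y2(t - h)) / (2 * h)
    return y1(t) * dy2 - dy1 * y2(t)


if __name__ == "__main__":
    # exp(t) and exp(2t): W = exp(3t), never zero, so the pair is independent.
    print(wronskian(math.exp, lambda t: math.exp(2 * t), 0.0))
    # cos(t) and sin(t): W = 1 for every t.
    print(wronskian(math.cos, math.sin, 1.2))
    # exp(t) and 3*exp(t) are multiples of each other: W = 0 everywhere.
    print(wronskian(math.exp, lambda t: 3 * math.exp(t), 0.5))
```

A value that is clearly nonzero at one point is enough, by the theorems above, to conclude that two solutions of the same equation are linearly independent on the interval.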

Two solutions to the differential equation

a) Find the Wronskian of the solutions and determine if they are linearly independent.

b) Write the general solution to the differential equation.

c) Find the solution satisfying the initial conditions

a) To find the Wronskian, we use Equation 3.1.3. We first need to find the first derivatives of the solutions

The Wronskian

b) Since the solutions are linearly independent, we can express the general solution to the differential equation as a combination of these solutions.

Here,

c) We apply the initial conditions to find constants

Applying the initial condition to

Applying the initial condition to

To determine

Solving the system yields

Therefore the solution to the initial value problem is

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=167


and  . Determine if the functions are linearly independent for all real numbers.

are  ,  .  .

a)

b)

are  ,  .  .

a)

b)

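We first consider the homogeneous equation with constant coefficients:

$$ay'' + by' + cy = 0 \qquad (3.2.1)$$

where $a \neq 0$, $b$, and $c$ are real constants. To solve this, we recognize that a solution to this equation must have the property that its second derivative can be expressed as a linear combination of the first derivative and the function itself, suggesting that the solution form is $y = e^{rt}$. Substituting $y = e^{rt}$, $y' = re^{rt}$, and $y'' = r^2e^{rt}$ into Equation 3.2.1 gives $e^{rt}\,(ar^2 + br + c) = 0$.

Since $e^{rt}$ is never zero, $r$ must satisfy

$$ar^2 + br + c = 0 \qquad (3.2.2)$$

Equation 3.2.2 is known as the auxiliary equation or characteristic equation (characteristic polynomial) of the homogeneous Equation 3.2.1. To determine the general solution of Equation 3.2.1, we solve for the roots $r$ of Equation 3.2.2.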

The roots of the characteristic equation determine the nature of the solution, leading to three possible cases based on whether the roots are real and distinct, real and repeated, or complex conjugate.

The General Solution to the Second-Order Linear DE with Constant Coefficients

Case 1: Two Distinct Real Roots

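If the characteristic equation (Equation 3.2.2) has two real roots $r_1 \neq r_2$, the general solution is

$$y = c_1e^{r_1t} + c_2e^{r_2t}$$

Case 2: Repeated Root

If the characteristic equation has a repeated root $r$, the general solution is

$$y = c_1e^{rt} + c_2te^{rt}$$

Case 3: Complex Conjugate Roots

If the characteristic equation has complex conjugate roots of the form $r = \alpha \pm i\beta$, the general solution is

$$y = e^{\alpha t}\left(c_1\cos\beta t + c_2\sin\beta t\right)$$

In this form, $\alpha$ is the real part and $\beta$ is the imaginary part of the roots.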
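The three cases can be summarized in a short routine that classifies the roots of $ar^2 + br + c = 0$ and reports the matching form of the general solution. This is a minimal sketch: the function name and the returned tuple layout are our own, and the exact test `disc == 0` is only reliable for inputs such as small integers where the discriminant is computed exactly.

```python
import math


def general_solution(a, b, c):
    """Classify the roots of a r^2 + b r + c = 0 and describe y for a y'' + b y' + c y = 0."""
    if a == 0:
        raise ValueError("a must be nonzero for a second-order equation")
    disc = b * b - 4 * a * c
    if disc > 0:  # Case 1: two distinct real roots
        root = math.sqrt(disc)
        r1, r2 = (-b + root) / (2 * a), (-b - root) / (2 * a)
        return "distinct real", (r1, r2), f"y = c1*exp({r1:g}*t) + c2*exp({r2:g}*t)"
    if disc == 0:  # Case 2: repeated root
        r = -b / (2 * a)
        return "repeated", (r, r), f"y = (c1 + c2*t)*exp({r:g}*t)"
    # Case 3: complex conjugate roots alpha +/- i*beta
    alpha, beta = -b / (2 * a), math.sqrt(-disc) / (2 * abs(a))
    text = f"y = exp({alpha:g}*t)*(c1*cos({beta:g}*t) + c2*sin({beta:g}*t))"
    return "complex", (alpha, beta), text


if __name__ == "__main__":
    for coeffs in [(1, -3, 2), (1, 2, 1), (1, 2, 5)]:
        print(coeffs, general_solution(*coeffs))
```

For the complex case the routine returns $(\alpha, \beta)$ rather than the two roots, since those are the numbers that appear in the solution formula.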

Find the general solution to the differential equation

The auxiliary equation is

The equation is factorable to

The roots are

Solve the following initial value problem (IVP).

Finding the general solution:

The auxiliary equation is

The equation is factorable to

The roots are

Applying the initial conditions:

Applying the initial condition to

Applying the initial condition to

To determine

Solving the system yields

Therefore the solution to the initial value problem is

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=169

Find the general solution to the differential equation

The auxiliary equation is

The equation is factorable to

The equation has a repeated root

Solve the following initial value problem (IVP).

Finding the general solution:

The auxiliary equation is

The equation is factorable to

The equation has a repeated root

Applying the initial conditions:

Applying the initial condition to

Applying the initial condition to

Plugging in

Therefore the solution to the initial value problem is


Find the general solution to the differential equation

The auxiliary equation is

Using the quadratic formula, we obtain

The equation has complex conjugate roots with a real part

Solve the following initial value problem (IVP).

Finding the general solution:

The auxiliary equation is

Instead of the quadratic formula used in the previous example, this time we find the roots by completing the square.

The equation has complex conjugate roots with a real part

Applying the initial conditions:

Applying the initial condition to

Applying the initial condition to

Plugging in

Therefore the solution to the initial value problem is


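In this section, we explore the nonhomogeneous linear second-order differential equation of the form:

$$y'' + p(t)y' + q(t)y = f(t) \qquad (3.3.1)$$

Uniqueness Theorem. If $p$, $q$, and $f$ are continuous on an open interval $(a, b)$ containing $t_0$, then for any numbers $k_0$ and $k_1$ the initial value problem consisting of Equation 3.3.1 with $y(t_0) = k_0$ and $y'(t_0) = k_1$ has a unique solution on $(a, b)$.

To solve Equation 3.3.1, we first need the solutions to the associated homogeneous equation

$$y'' + p(t)y' + q(t)y = 0 \qquad (3.3.2)$$

We refer to Equation 3.3.2 as the complementary equation for Equation 3.3.1.

General Solution Theorem. Suppose $y_p$ is a particular solution of Equation 3.3.1 and $\{y_1, y_2\}$ is a fundamental set of solutions of Equation 3.3.2. Then the general solution of Equation 3.3.1 is

$$y = y_p + c_1y_1 + c_2y_2$$

Here $y_p$ is a particular solution, $y_c = c_1y_1 + c_2y_2$ is the complementary solution, and $c_1$ and $c_2$ are arbitrary constants.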

The superposition principle is a powerful tool that allows us to simplify solving nonhomogeneous equations. It works by dividing the forcing function into simpler components, finding a particular solution for each component, and then adding those solutions together to form a complete solution to the original equation.
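A quick numerical check can make the principle tangible. The sketch below uses a hypothetical equation that is not taken from the text, $y'' + y = f(t)$, for which $t$ is a particular solution when $f(t) = t$ and $e^t/2$ is a particular solution when $f(t) = e^t$. It then confirms that a weighted sum of the two solves the equation with the correspondingly weighted forcing function.

```python
import math


def residual(y, f, t, h=1e-4):
    """Return y''(t) + y(t) - f(t), with y'' from a central second difference."""
    y_dd = (y(t + h) - 2 * y(t) + y(t - h)) / h**2
    return y_dd + y(t) - f(t)


# Particular solutions of the two simpler problems (hypothetical example).
def yp1(t):
    return t  # solves y'' + y = t


def yp2(t):
    return math.exp(t) / 2  # solves y'' + y = exp(t)


K1, K2 = 3.0, -2.0  # arbitrary weights


def combo(t):
    return K1 * yp1(t) + K2 * yp2(t)


def forcing(t):
    return K1 * t + K2 * math.exp(t)


if __name__ == "__main__":
    print(abs(residual(combo, forcing, 0.7)) < 1e-5)
```

The residual is not exactly zero because the second derivative is approximated, which is why the check uses a tolerance.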

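Theorem. If $y_{p_1}$ is a particular solution of

$$y'' + p(t)y' + q(t)y = f_1(t)$$

and $y_{p_2}$ is a particular solution of

$$y'' + p(t)y' + q(t)y = f_2(t)$$

then for any constants $k_1$ and $k_2$, the function $y_p = k_1y_{p_1} + k_2y_{p_2}$ is a particular solution of

$$y'' + p(t)y' + q(t)y = k_1f_1(t) + k_2f_2(t)$$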

Given

Looking at the right-hand side of the equations, we notice that

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=172

is a particular solution to  , and  is a particular solution to  , use the method of superposition to find a particular solution to

is a particular solution to  , and  is a particular solution to  , use the principle of superposition to find a particular solution to

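The method of undetermined coefficients is a technique for finding particular solutions, $y_p$, to nonhomogeneous linear differential equations with constant coefficients when the forcing function is a polynomial, an exponential, a sine or cosine, or a sum or product of these.

To apply this method, we first identify the form of the forcing function $f(t)$ and then guess a particular solution of the same form with coefficients still to be determined.

Polynomial Forcing Functions: For a polynomial $f(t)$ of degree $n$, the guess is a general polynomial of degree $n$, $y_p = A_nt^n + \dots + A_1t + A_0$.

Exponential Forcing Functions: For $f(t) = ke^{\alpha t}$, the guess is $y_p = Ae^{\alpha t}$.

Adjusting the Guess Based on Complementary Equation Solutions: If the complementary equation has a solution matching part of the guess, multiply the guess by $t^s$, where $s$ is the smallest positive integer for which no term of the modified guess solves the complementary equation.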

Note that we use

Find the general solution to the following equation.

Finding the complementary solution:

While it’s not necessary to know the complementary solution to find the particular solution, knowing it is beneficial. Understanding the complementary solution helps us make better initial guesses for the particular solution and adjust them accordingly before we proceed with the algebra needed to determine the undetermined coefficients.

The auxiliary equation associated with the complementary equation is

Guessing the form of the particular solution:

Since

Plugging in the guess into the equation to find A:

Next, we plug in the guess and its derivatives into the differential equation to determine the undetermined coefficient A.

Therefore, the particular solution to the differential equation is

Finding the general solution:

The general solution of a nonhomogeneous equation is

where

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=174

Find the general solution to the following equation.

Finding the complementary solution:

The complementary equation is similar to the one in Example 3.4.2. Thus,

Guessing the form of the particular solution:

Our initial guess is

Plugging in the guess into the equation to find A:

Next, we plug in the guess and its derivatives into the differential equation to determine the undetermined coefficient A.

Factoring the exponential term and collecting the like terms yields

Therefore, the particular solution to the differential equation is

Finding the general solution:

The general solution is


Solve the following initial value problem.

Finding the general solution:

The equation is similar to the one in Example 3.4.3. Therefore, the general solution is

Applying the initial conditions:

Applying the initial condition to

Applying the initial condition to

Plugging in

Therefore, the solution to the initial value problem is

Note that the initial conditions must satisfy the entire solution of the nonhomogeneous equation, not just the complementary part. Therefore, we apply the initial conditions directly to the general solution of the given nonhomogeneous equation to determine the constants.
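The guess-and-adjust rule for an exponential forcing function can be sketched in a few lines. For $ay'' + by' + cy = e^{\alpha t}$, the code below picks the power $s$ in the guess $y_p = A\,t^s e^{\alpha t}$ and computes $A$ directly. The function name and the test equations are hypothetical, and the exact zero tests assume inputs (such as small integers) for which the arithmetic is exact.

```python
def exponential_guess(a, b, c, alpha):
    """For a y'' + b y' + c y = exp(alpha t), return (s, A) with y_p = A t^s exp(alpha t)."""
    p = a * alpha**2 + b * alpha + c  # characteristic polynomial evaluated at alpha
    dp = 2 * a * alpha + b            # its derivative evaluated at alpha
    if p != 0:
        return 0, 1 / p         # alpha is not a root: plain guess A exp(alpha t)
    if dp != 0:
        return 1, 1 / dp        # alpha is a simple root: multiply the guess by t
    return 2, 1 / (2 * a)       # alpha is a repeated root: multiply the guess by t^2


if __name__ == "__main__":
    print(exponential_guess(1, -3, 2, 3))  # alpha = 3 is not a root of r^2 - 3r + 2
    print(exponential_guess(1, -3, 2, 1))  # alpha = 1 is a simple root
    print(exponential_guess(1, -2, 1, 1))  # alpha = 1 is a repeated root of r^2 - 2r + 1
```

The three branches mirror the adjustment rule: the guess is multiplied by $t$ once for each time $\alpha$ occurs as a root of the characteristic equation.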

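The following section summarizes the appropriate forms of guesses for various types of forcing functions and explains how to modify these guesses if any part of the forcing function $f(t)$ already appears in the complementary solution.

To find a particular solution to the differential equation $ay'' + by' + cy = f(t)$, choose the guess $y_p$ according to the form of $f(t)$:

| Forcing function $f(t)$ | Guess $y_p(t)$ |
|---|---|
| $n$th-degree polynomial | $A_nt^n + \dots + A_1t + A_0$ |
| $ke^{\alpha t}$ | $Ae^{\alpha t}$ |
| $k\cos\beta t$ | $A\cos\beta t + B\sin\beta t$ |
| $k\sin\beta t$ | $A\cos\beta t + B\sin\beta t$ |
| $k_1\cos\beta t + k_2\sin\beta t$ | $A\cos\beta t + B\sin\beta t$ |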

Remarks

1. Exponential and Polynomial Products: If

2. Complex Roots: If

3. Exponential and Trigonometric/Polynomial Products: If

4. Polynomial and Trigonometric Products: If

Find the form of a particular solution to

where

a)

The auxiliary equation associated with the equation is

a)

b) This function contains the product of polynomials (second degree) and trig functions. Using Remark 4, first, we guess the polynomial and multiply it by the proper cosine. We then add it to the product of another guessed polynomial with different coefficients and a sine.

c) This function contains the product of exponential, polynomial (first degree), and trig functions. Using Remarks 3 and 4, first, we guess the polynomial and multiply it by the proper cosine. We then add it to the product of another guessed polynomial with different coefficients and a sine. Finally, we multiply the exponential part.

d) Since

e) This function contains the product of exponential and polynomial (second degree). Using Remark 3, first, we guess the polynomial and multiply the exponential part. The polynomial guess will be


The method of variation of parameters is another technique used to find particular solutions to nonhomogeneous linear differential equations. It works for equations with either constant or variable coefficients and is applicable even when the forcing function, $f(t)$, is not of a type that the method of undetermined coefficients can handle.

Unlike the method of undetermined coefficients where the complementary solution aids in guessing the form of the particular solution, variation of parameters requires the complementary solution to determine the particular solution.

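We first focus on applying the method of variation of parameters to nonhomogeneous constant-coefficient equations. Consider the nonhomogeneous linear second-order equation

$$ay'' + by' + cy = f(t) \qquad (3.5.1)$$

Let $\{y_1(t), y_2(t)\}$ be a fundamental set of solutions to the corresponding homogeneous equation, so that the complementary solution is $y_c = c_1y_1 + c_2y_2$. The idea is to replace the constants $c_1$ and $c_2$ with functions $v_1(t)$ and $v_2(t)$ and seek a particular solution of the form $y_p = v_1y_1 + v_2y_2$.

We aim to substitute $y_p$ into Equation 3.5.1, so we first compute its derivative:

$$y_p' = (v_1'y_1 + v_2'y_2) + (v_1y_1' + v_2y_2')$$

Since we have more parameters than we have equations, we impose that

$$v_1'y_1 + v_2'y_2 = 0 \qquad \text{(i)}$$

which simplifies the first derivative to $y_p' = v_1y_1' + v_2y_2'$.

We then find

$$y_p'' = v_1'y_1' + v_1y_1'' + v_2'y_2' + v_2y_2''$$

After substituting $y_p$, $y_p'$, and $y_p''$ into Equation 3.5.1 and grouping terms, we obtain

$$a\,(v_1'y_1' + v_2'y_2') + v_1\,(ay_1'' + by_1' + cy_1) + v_2\,(ay_2'' + by_2' + cy_2) = f(t)$$

The expressions multiplied by $v_1$ and $v_2$ are zero because $y_1$ and $y_2$ satisfy the homogeneous equation, which leaves

$$a\,(v_1'y_1' + v_2'y_2') = f(t) \qquad \text{(ii)}$$

Combining (i) and (ii) yields a system of equations

$$y_1v_1' + y_2v_2' = 0, \qquad y_1'v_1' + y_2'v_2' = \frac{f(t)}{a}$$

Solving the system for $v_1'$ and $v_2'$ gives

$$v_1' = \frac{-f(t)\,y_2}{a\,(y_1y_2' - y_1'y_2)}, \qquad v_2' = \frac{f(t)\,y_1}{a\,(y_1y_2' - y_1'y_2)}$$

Notice that the term in the parenthesis in the denominator is the Wronskian ($W[y_1, y_2](t)$), which is never zero because $y_1$ and $y_2$ are linearly independent.

To find a particular solution to Equation 3.5.1,

1. Find a Solution to the Homogeneous Equation: Determine a fundamental set of solutions $\{y_1, y_2\}$ to the corresponding homogeneous equation and compute their Wronskian $W$.

2. Determine $v_1$ and $v_2$: Integrate the expressions for $v_1'$ and $v_2'$,

$$v_1 = \int \frac{-f(t)\,y_2}{a\,W}\,dt, \qquad v_2 = \int \frac{f(t)\,y_1}{a\,W}\,dt$$

3. Construct the Particular Solution: Combine $v_1$ and $v_2$ with $y_1$ and $y_2$ to form $y_p = v_1y_1 + v_2y_2$.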
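The three steps can be tried on a classic case that undetermined coefficients cannot handle. The sketch below uses a hypothetical equation that is not from the text, $y'' + y = \tan t$, with $y_1 = \cos t$, $y_2 = \sin t$, and $W = 1$. The integrals for $v_1$ and $v_2$ were worked out by hand and are coded directly; the script then checks numerically that $y_p$ satisfies the equation.

```python
import math


def y_p(t):
    """Particular solution of y'' + y = tan(t) built as v1*y1 + v2*y2."""
    # v1' = -tan(t)*sin(t) = cos(t) - sec(t)  ->  v1 = sin(t) - ln|sec(t) + tan(t)|
    v1 = math.sin(t) - math.log(abs(1 / math.cos(t) + math.tan(t)))
    # v2' = tan(t)*cos(t) = sin(t)            ->  v2 = -cos(t)
    v2 = -math.cos(t)
    return v1 * math.cos(t) + v2 * math.sin(t)


def residual(t, h=1e-4):
    """Return y_p''(t) + y_p(t) - tan(t), with y_p'' from a central second difference."""
    second = (y_p(t + h) - 2 * y_p(t) + y_p(t - h)) / h**2
    return second + y_p(t) - math.tan(t)


if __name__ == "__main__":
    for t in (0.2, 0.5, 1.0):
        print(t, abs(residual(t)) < 1e-5)
```

Both constants of integration were taken as zero, as is usual when only one particular solution is needed.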

Find a particular solution to

To find a particular solution using the method of variation of parameters, we should first find a solution to the associated homogeneous equation:

1. The characteristic polynomial of the complementary equation

So the solution is a repeated root

The Wronskian of the fundamental set is

2. Next substituting

Finding

This integral can be evaluated using the technique of integration by parts.

Finding

This integral can be evaluated using the technique of integration by parts.

Since we only need one particular solution, we set the constants of integration to zero in

3. We substitute

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=176

Find (a) a particular and then (b) a general solution to

a) To find a general solution, we first need to find a particular solution. To find a particular solution using the variation of parameters method, we should first find a set of fundamental solutions to the associated homogeneous equation:

1. The characteristic polynomial of the complementary equation

So the solutions are

2. Next we find

and

Since we only need one particular solution, we take both constants of integration as zero for simplicity.

3. We substitute

b) To find a general solution we add the general solution to the homogeneous equation and a particular solution:

Notice that the terms


Having discussed solving homogeneous and nonhomogeneous second-order differential equations with constant coefficients, we now turn our attention to equations where the coefficients are functions of the independent variable. The method of variation of parameters is suitable for such equations.

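For a differential equation of the form

$$a_2(t)y'' + a_1(t)y' + a_0(t)y = f(t)$$

valid solutions are expected on an open interval where all four governing functions, $a_2(t)$, $a_1(t)$, $a_0(t)$, and $f(t)$, are continuous and $a_2(t)$ is nonzero. Dividing by $a_2(t)$ gives the standard form $y'' + p(t)y' + q(t)y = g(t)$.

Existence and Uniqueness Theorem: If $p(t)$, $q(t)$, and $g(t)$ are continuous on an interval $(a, b)$ that contains the point $t_0$, then for any choice of the initial values $Y_0$ and $Y_1$, there exists a unique solution on $(a, b)$ to the initial value problem $y'' + p(t)y' + q(t)y = g(t)$, $y(t_0) = Y_0$, $y'(t_0) = Y_1$.

The methodological steps for variable-coefficient equations mirror those for constant coefficients, except that the equation must first be written in the standard form.

Method of Variation of Parameters for Variable-Coefficient Equations

1. Standardize the equation: Divide the equation by the coefficient of $y''$ to obtain the standard form $y'' + p(t)y' + q(t)y = g(t)$.

2. Linearly Independent Solutions: Find two linearly independent solutions, $y_1$ and $y_2$, to the corresponding homogeneous equation and compute their Wronskian $W$.

3. Determine $v_1$ and $v_2$: Integrate

$$v_1 = \int \frac{-g(t)\,y_2}{W}\,dt, \qquad v_2 = \int \frac{g(t)\,y_1}{W}\,dt$$

4. Construct the Particular Solution: Combine $v_1$ and $v_2$ with $y_1$ and $y_2$ to form $y_p = v_1y_1 + v_2y_2$.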

Find a particular solution to the following differential equation given

1. First, divide the equation by the coefficient of

2. To find a particular solution using the method of variation of parameters, we need a fundamental set of solutions to the associated homogeneous equation. The provided solutions

The Wronskian of the solution set is

The Wronskian is never zero. Therefore, the solution set is a fundamental set of solutions.

3. Next substituting

Finding

Letting

Finding

Letting

4. Substitute


. or for variable-coefficient equations.

and  satisfy the corresponding homogeneous equation.

The method of Reduction of Order is a technique for finding a second solution to a second-order linear differential equation when one solution is already known. It is useful for both homogeneous and nonhomogeneous equations.

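Generally, to apply the reduction of order method for the nonhomogeneous equation

$$y'' + p(t)y' + q(t)y = g(t)$$

we assume the second solution has the form $y_2(t) = v(t)\,y_1(t)$, where $y_1$ is a known solution of the corresponding homogeneous equation. Substituting into the equation and setting $w = v'$ reduces it to the first-order equation

$$y_1w' + (2y_1' + p\,y_1)\,w = g$$

We can then solve this first-order differential equation using standard techniques, integrate it to find $v$, and multiply the result by $y_1$.

For a homogeneous equation with a known solution $y_1$, the steps are:

1. Standardize the equation: Divide the equation by the coefficient of $y''$ to obtain $y'' + p(t)y' + q(t)y = 0$.

2. Determine $p(t)$: Read off the coefficient of $y'$ in the standard form.

3. Find the Second Solution:

$$y_2(t) = y_1(t)\int \frac{e^{-\int p(t)\,dt}}{[y_1(t)]^2}\,dt$$

4. Form the General Solution: The general solution is then a combination of both solutions, $y = c_1y_1 + c_2y_2$.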

Note that constant
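The reduction of order formula $y_2 = y_1\int e^{-\int p\,dt}/y_1^2\,dt$ can also be evaluated numerically when the integrals are awkward. The sketch below does this with the trapezoidal rule for a hypothetical equation that is not from the text, $y'' - \tfrac{3}{t}y' + \tfrac{4}{t^2}y = 0$ with known solution $y_1 = t^2$, whose second solution is $t^2\ln t$. The function name, the lower limit `t0`, and the step count are our own choices.

```python
import math


def second_solution(y1, p, t_end, t0=1.0, n=20000):
    """Approximate y2(t_end) = y1(t_end) * integral from t0 of exp(-int p) / y1^2."""
    h = (t_end - t0) / n
    p_integral = 0.0  # running value of the inner integral of p from t0 to s
    total = 0.0       # running value of the outer integral
    s = t0
    g_prev = math.exp(-p_integral) / y1(s) ** 2
    for _ in range(n):
        s_next = s + h
        p_integral += 0.5 * h * (p(s) + p(s_next))  # trapezoid step, inner integral
        g_next = math.exp(-p_integral) / y1(s_next) ** 2
        total += 0.5 * h * (g_prev + g_next)        # trapezoid step, outer integral
        s, g_prev = s_next, g_next
    return y1(t_end) * total


if __name__ == "__main__":
    approx = second_solution(lambda t: t**2, lambda t: -3.0 / t, 2.0)
    print(approx, 4 * math.log(2))  # both values should agree closely
```

Choosing a different lower limit `t0` only adds a constant multiple of $y_1$ to the result, so it yields an equally valid second solution.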

Given

1. First, standardize the equation by dividing it by the coefficient of

2. Identify

3. The second solution is given by

We are looking for the simplest

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=178

Although the steps above are detailed for homogeneous equations, the method also applies to nonhomogeneous equations: we first solve the associated homogeneous equation and then find a particular solution using the standard methods discussed for nonhomogeneous equations.

Given

a. Finding the second solution of the complementary equation:

We follow the steps of the reduction of order method to find the second linearly independent solution to the complementary equation.

1a. First, standardize the equation by dividing it by the coefficient of

2a. Identify

3a. The second solution is given by

4a. The general solution to the complementary equation is

Constant

b. Finding a particular solution of the nonhomogeneous equation:

We use the method of variation of parameters to find the particular solution

1b. Standardize the original differential equation.

2b. The solutions to the homogeneous equation are now known:

The Wronskian of the fundamental set is

3b. Next substituting

Finding

Finding

4b. Substitute

c. Finding the General Solution

The general solution to the nonhomogeneous equation is the sum of the particular solution and complementary solution.

d. Applying the initial conditions

Applying the initial condition to

Applying the initial condition to

To determine

Solving the system yields

Therefore the solution to the initial value problem is


is a solution to the given equation, use the Method of Reduction of Order to find a second solution.

satisfies the complementary equation.

satisfies the complementary equation.

The Cauchy-Euler equation, also known as the Euler-Cauchy equation or simply Euler’s equation, is a type of second-order linear differential equation with variable coefficients that appears in many applications in physics and engineering. Its defining feature is that each coefficient is a power of the independent variable that matches the order of the derivative it multiplies.

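A second-order Cauchy-Euler equation is generally of the form:

$$at^2y'' + bty' + cy = f(t) \qquad (3.7.1)$$

Here $a$, $b$, and $c$ are constants with $a \neq 0$, and the equation is homogeneous when $f(t) = 0$.

To solve a homogeneous Cauchy-Euler Equation 3.7.1,

1. Substitute and Transform: Let $y = t^r$ for $t > 0$, so that $y' = rt^{r-1}$ and $y'' = r(r-1)t^{r-2}$. Substituting into the homogeneous equation gives

$$t^r\left[ar(r-1) + br + c\right] = 0$$

which yields the characteristic equation

$$ar^2 + (b - a)r + c = 0$$

2. Solve the Characteristic Equation: Similar to the equations with constant coefficients, we solve the quadratic equation for $r$, which leads to three cases.

Case 1: Two Distinct Real Roots

The general solution will be the linear combination of $t^{r_1}$ and $t^{r_2}$: $y = c_1t^{r_1} + c_2t^{r_2}$.

Case 2: Repeated Root

The general solution will be the linear combination of $t^r$ and $t^r\ln t$: $y = c_1t^r + c_2t^r\ln t$.

Case 3: Complex Conjugate Roots

The general solution will be the linear combination of $t^{\alpha}\cos(\beta\ln t)$ and $t^{\alpha}\sin(\beta\ln t)$, where the roots are $r = \alpha \pm i\beta$: $y = t^{\alpha}\left[c_1\cos(\beta\ln t) + c_2\sin(\beta\ln t)\right]$.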
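The procedure for the homogeneous Cauchy-Euler equation can be sketched as a small routine that forms the characteristic equation $ar^2 + (b - a)r + c = 0$ and reports the matching case for $t > 0$. The function name and the sample coefficients are hypothetical, and the exact test `disc == 0` is only dependable when the discriminant is computed exactly (for example, with small integer coefficients).

```python
import math


def cauchy_euler_general_solution(a, b, c):
    """Describe the general solution of a t^2 y'' + b t y' + c y = 0 for t > 0."""
    if a == 0:
        raise ValueError("a must be nonzero")
    lin = b - a  # linear coefficient of the characteristic equation
    disc = lin * lin - 4 * a * c
    if disc > 0:  # Case 1: two distinct real roots
        root = math.sqrt(disc)
        r1, r2 = (-lin + root) / (2 * a), (-lin - root) / (2 * a)
        return "distinct real", (r1, r2), f"y = c1*t**({r1:g}) + c2*t**({r2:g})"
    if disc == 0:  # Case 2: repeated root
        r = -lin / (2 * a)
        return "repeated", (r, r), f"y = (c1 + c2*ln(t))*t**({r:g})"
    # Case 3: complex conjugate roots alpha +/- i*beta
    alpha, beta = -lin / (2 * a), math.sqrt(-disc) / (2 * abs(a))
    text = f"y = t**({alpha:g})*(c1*cos({beta:g}*ln(t)) + c2*sin({beta:g}*ln(t)))"
    return "complex", (alpha, beta), text


if __name__ == "__main__":
    for coeffs in [(1, 0, -2), (1, -3, 4), (1, 1, 1)]:
        print(coeffs, cauchy_euler_general_solution(*coeffs))
```

The only difference from the constant-coefficient routine is the shifted linear coefficient $b - a$ and the replacement of $e^{rt}$ by $t^r$ (equivalently, of $t$ by $\ln t$ inside the exponentials and trigonometric functions).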

Solve the initial value problem

The equation is Cauchy-Euler.

1. So first we find its characteristic polynomial given

The equation has a repeated root

2. Therefore, the general solution of the equation is

3. We use the initial values to find

Therefore, the solution to the IVP is

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=180

For a nonhomogeneous Cauchy-Euler equation, the method of variation of parameters or undetermined coefficients (if applicable) is used.

As we progress from first-order to second-order ordinary differential equations, we encounter a variety of applications that can be modeled by these higher-order equations. In this section and the next, we focus on mechanical vibrations and electrical circuits (RLC circuits) as two primary areas where second-order differential equations are extensively applied. These areas are fundamental in engineering and physics, providing rich contexts for understanding dynamic system behavior.

Studying mechanical vibrations is crucial for designing and analyzing systems that experience oscillatory motion. Understanding vibrations helps engineers reduce noise, prevent catastrophic failure due to resonance, and optimize the performance of various mechanical systems ranging from buildings and bridges to vehicle suspensions and electronic components. Modeling these systems allows engineers to predict responses to various stimuli, ensuring safety and functionality.

To model a vibratory system, we often use a simplified representation involving masses, springs, and dampers. These elements capture the essential dynamics of more complex real-world systems. Using Newton’s laws of motion or energy methods, we develop a mathematical model that typically results in a second-order differential equation.

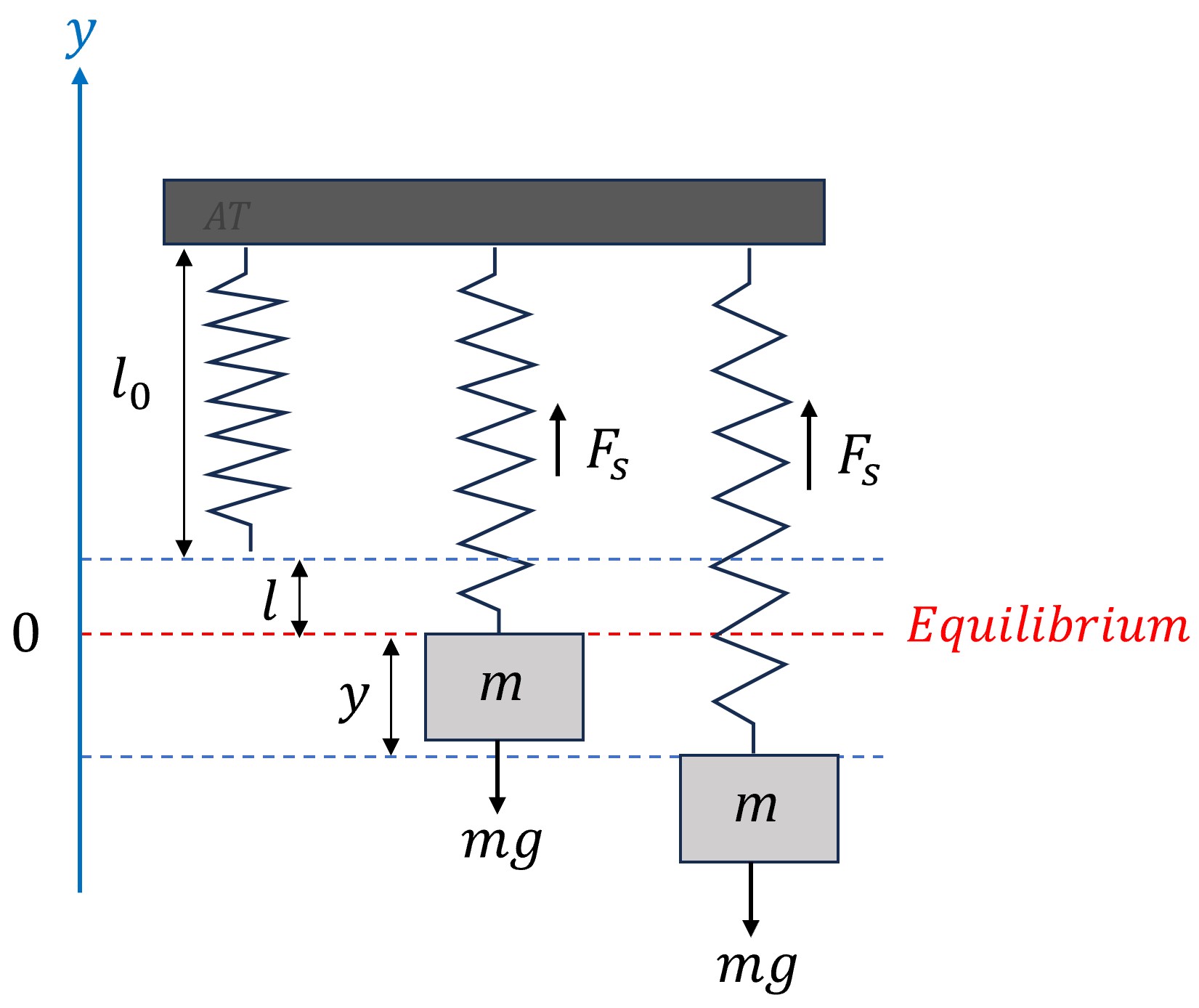

This system consists of a mass, typically denoted as $m$, attached to a spring of length $l$ when unstretched. When we attach a mass $m$ to the spring, it stretches by a length $\Delta l$. This point where the mass comes to rest and the spring ceases to stretch further is known as the equilibrium position. At this point, the system is stable, and the mass hangs motionless until disturbed. In this system, we define $y$ as the displacement of the mass from its equilibrium position, where positive values indicate upward movement.

To derive the equation governing the motion of a spring-mass-damper system, we apply Newton’s second law of motion, which relates the net force acting on the mass to its acceleration. The primary forces acting on the mass in a spring-mass system include:

Gravity, $F_g = -mg$, acting downward.

Spring force, $F_s = -k(y - \Delta l)$, where $k$ is the spring constant. This force is governed by Hooke's law and is typically proportional to the displacement from the spring's natural length ($y - \Delta l$) and opposite in direction.

Damping force, $F_d = -cy'$, where $c$ is the damping coefficient. If present, the damping force is proportional to the velocity of the mass and acts in the opposite direction of motion.

External force, $F(t)$. It includes any external force acting on the system, which might be periodic or random, leading to forced vibrations.

According to Newton's second law,

$$ma = F_g + F_s + F_d + F(t).$$

Substituting all the forces and writing acceleration as the second derivative of displacement yields

$$my'' = -mg - k(y - \Delta l) - cy' + F(t).$$

At equilibrium ($y = 0$, with the mass at rest and no external force), the sum of all forces acting on the mass equals zero. Therefore,

$$mg = k\,\Delta l.$$

Simplify the equation by incorporating $mg = k\,\Delta l$:

$$my'' + cy' + ky = F(t).$$

Here, $m$ is the mass, $c$ is the damping coefficient, $k$ is the spring constant, and $F(t)$ is the external force.
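The model $my'' + cy' + ky = F(t)$ can also be explored numerically. The following is a minimal sketch, not part of the original text, that integrates the equation with the classical fourth-order Runge-Kutta method. The function name `simulate`, its parameters, and the step size are illustrative assumptions, and upward displacement is taken as positive.

```python
import math

def simulate(m, c, k, force, y0, v0, t_end, dt=1e-3):
    """Integrate m*y'' + c*y' + k*y = force(t) with classical RK4.

    Returns (t, y, v) at the final time. Names are illustrative only.
    """
    def accel(t, y, v):
        # Solve the equation of motion for the acceleration y''.
        return (force(t) - c * v - k * y) / m

    steps = round(t_end / dt)
    t, y, v = 0.0, y0, v0
    for _ in range(steps):
        k1y, k1v = v, accel(t, y, v)
        k2y = v + 0.5 * dt * k1v
        k2v = accel(t + 0.5 * dt, y + 0.5 * dt * k1y, v + 0.5 * dt * k1v)
        k3y = v + 0.5 * dt * k2v
        k3v = accel(t + 0.5 * dt, y + 0.5 * dt * k2y, v + 0.5 * dt * k2v)
        k4y = v + dt * k3v
        k4v = accel(t + dt, y + dt * k3y, v + dt * k3v)
        y += dt * (k1y + 2 * k2y + 2 * k3y + k4y) / 6
        v += dt * (k1v + 2 * k2v + 2 * k3v + k4v) / 6
        t += dt
    return t, y, v

# Free, undamped test case with m = 1, k = 4: the exact solution is y = cos(2t).
t, y, v = simulate(m=1.0, c=0.0, k=4.0, force=lambda t: 0.0,
                   y0=1.0, v0=0.0, t_end=1.0)
print(y, math.cos(2.0 * t))
```

Comparing the numerical value with the known closed-form solution, as in the last two lines, is a simple way to check the step size before trying forcing functions that have no convenient analytic solution.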

Depending on which forces act on the system, there are several special cases:

Free, undamped vibration ($c = 0$, $F(t) = 0$): The simplest form of vibration occurs when there is no damping and no external force. The system oscillates at its natural frequency, determined by the mass and spring constant.

Free, damped vibration ($c \neq 0$, $F(t) = 0$): When damping is present but there is no external force, the system experiences damped vibrations leading to a gradual decrease in oscillation amplitude over time. The nature of the damping (underdamped, critically damped, or overdamped) depends on the values of $m$, $c$, and $k$.

Forced, undamped vibration ($c = 0$, $F(t) \neq 0$): When an external force acts on the system, the system experiences forced vibrations. If the frequency of the external force is close to the system's natural frequency, resonance can occur, leading to large amplitude oscillations.

Forced, damped vibration ($c \neq 0$, $F(t) \neq 0$): This is the most general case, combining the effects of damping and external forcing, leading to complex oscillatory behavior.

The simplest form of vibration occurs when there is no damping ($c = 0$) and no external force ($F(t) = 0$). The equation of motion then reduces to

$$my'' + ky = 0.$$

This equation is a homogeneous second-order linear differential equation. By solving the characteristic equation $mr^2 + k = 0$, we obtain the roots $r = \pm i\sqrt{k/m}$, and the general solution is

$$y(t) = c_1\cos\omega_0 t + c_2\sin\omega_0 t. \tag{3.8.3}$$

The term $\omega_0 = \sqrt{k/m}$ is the natural frequency of the system.

It is often convenient to represent the displacement in the amplitude-phase form with a single trigonometric function

$$y(t) = R\cos(\omega_0 t - \phi). \tag{3.8.4}$$

Here $R = \sqrt{c_1^2 + c_2^2}$ is the amplitude and $\phi$ is the phase angle, with $c_1 = R\cos\phi$ and $c_2 = R\sin\phi$.

The motion described by Equation 3.8.4 is known as simple harmonic motion, characterized by its sinusoidal nature and constant frequency. The period of the motion is $T = 2\pi/\omega_0$.

In the metric system, the gravitational acceleration $g$ is typically given as $9.8\ \text{m/s}^2$, and lengths should be in meters with mass in kilograms. In the Imperial system, $g$ is about $32\ \text{ft/s}^2$, with lengths in feet and mass in slugs.

Choosing the phase angle $\phi$ in an interval of length $2\pi$, such as $[0, 2\pi)$, ensures a unique solution within one complete cycle. With $c_1 = R\cos\phi$ and $c_2 = R\sin\phi$, the signs of $c_1$ and $c_2$ determine the quadrant in which $\phi$ lies.

If $c_1 > 0$ and $c_2 > 0$, $\phi$ is in the first quadrant.

If $c_1 < 0$ and $c_2 > 0$, $\phi$ is in the second quadrant.

If $c_1 < 0$ and $c_2 < 0$, $\phi$ is in the third quadrant.

If $c_1 > 0$ and $c_2 < 0$, $\phi$ is in the fourth quadrant.
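The quadrant rules above can be handled in one step with a two-argument arctangent. The sketch below assumes the convention $c_1 = R\cos\phi$, $c_2 = R\sin\phi$ and a phase angle reported in $[0, 2\pi)$. Both the convention and the helper name `amplitude_phase` are assumptions made for illustration.

```python
import math

def amplitude_phase(c1, c2):
    """Return (R, phi) such that c1 = R*cos(phi) and c2 = R*sin(phi).

    phi is reduced to the interval [0, 2*pi).
    """
    R = math.hypot(c1, c2)                     # sqrt(c1**2 + c2**2)
    phi = math.atan2(c2, c1) % (2 * math.pi)   # atan2 picks the quadrant from the signs
    return R, phi

# c1 < 0 and c2 > 0, so phi should land in the second quadrant.
R, phi = amplitude_phase(-1.0, 1.0)
print(R, phi)
```

Using `atan2` avoids computing a reference angle and then correcting it by hand, which is where sign errors usually creep in.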

A 150 cm long vertical spring hangs from a fixed ceiling. A 2-kg object is attached to the lower end of the spring, and the length of the spring becomes 155 cm where the object is in equilibrium. The object is then pulled down an additional 3 cm and released with an initial upward velocity of 20 cm/s. Assuming no damping and no external forces other than gravity are acting on the system:

a) Find the displacement of the object as a function of time.

b) Determine the natural frequency, period, amplitude, and phase angle of the motion.

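c) Rewrite the equation of motion in the amplitude-phase form $y(t) = R\cos(\omega_0 t - \phi)$.

Express your answers in cgs units, where $g = 980\ \text{cm/s}^2$.

Given information: $m = 2\ \text{kg} = 2000\ \text{g}$, $\Delta l = 155 - 150 = 5\ \text{cm}$, $y(0) = -3\ \text{cm}$, and $y'(0) = 20\ \text{cm/s}$ (upward is positive).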

a)

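Calculating the spring constant:

At equilibrium, the forces acting on the object are balanced, meaning $mg = k\,\Delta l$, so

$$k = \frac{mg}{\Delta l} = \frac{(2000)(980)}{5} = 392{,}000\ \text{dyn/cm}.$$

Calculating the natural frequency:

The natural frequency is

$$\omega_0 = \sqrt{\frac{k}{m}} = \sqrt{\frac{392{,}000}{2000}} = \sqrt{196} = 14\ \text{rad/s}.$$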

It is important to note that to find

Finding the general solution:

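Since there is no damping force and no external force, the initial value problem is $2000y'' + 392{,}000y = 0$ or, after dividing by the mass,

$$y'' + 196y = 0, \quad y(0) = -3, \quad y'(0) = 20.$$

The general solution to this equation is given by Equation 3.8.3.

$$y(t) = c_1\cos 14t + c_2\sin 14t$$

Applying the initial conditions:

$$y(0) = c_1 = -3, \qquad y'(0) = 14c_2 = 20 \;\Rightarrow\; c_2 = \frac{10}{7}$$

The equation of the object displacement is then

$$y(t) = -3\cos 14t + \frac{10}{7}\sin 14t.$$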

b)

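Natural frequency: $\omega_0 = 14\ \text{rad/s}$

Period: $T = \dfrac{2\pi}{\omega_0} = \dfrac{\pi}{7} \approx 0.449\ \text{s}$

Amplitude: $R = \sqrt{c_1^2 + c_2^2} = \sqrt{(-3)^2 + \left(\tfrac{10}{7}\right)^2} = \dfrac{\sqrt{541}}{7} \approx 3.32\ \text{cm}$

Phase angle:

The reference phase angle is determined by $\arctan\left|\dfrac{c_2}{c_1}\right| = \arctan\dfrac{10}{21} \approx 0.444\ \text{rad}$.

Since $c_1 < 0$ and $c_2 > 0$, $\phi$ is in the second quadrant, so $\phi = \pi - 0.444 \approx 2.70\ \text{rad}$.

c) The equation of motion can be written as

$$y(t) = \frac{\sqrt{541}}{7}\cos(14t - 2.70) \approx 3.32\cos(14t - 2.70).$$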

The graph of the displacement is shown for the first 7 seconds.

One or more interactive elements has been excluded from this version of the text. You can view them online here: https://ecampusontario.pressbooks.pub/diffeq/?p=182
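The arithmetic in this example can be checked with a few lines of code. This is a sketch under stated assumptions: upward displacement is positive, the amplitude-phase form is $R\cos(\omega_0 t - \phi)$, and the variable names are chosen here for illustration.

```python
import math

m = 2000.0             # mass in grams (2 kg)
g = 980.0              # gravitational acceleration in cm/s^2
stretch = 5.0          # equilibrium stretch in cm (155 cm - 150 cm)
y0, v0 = -3.0, 20.0    # pulled down 3 cm, released upward at 20 cm/s

k = m * g / stretch          # from m*g = k*stretch at equilibrium (dyn/cm)
omega0 = math.sqrt(k / m)    # natural frequency in rad/s
c1 = y0                      # from y(0) = c1
c2 = v0 / omega0             # from y'(0) = omega0 * c2
R = math.hypot(c1, c2)       # amplitude in cm
phi = math.atan2(c2, c1)     # phase angle; second quadrant since c1 < 0 < c2
T = 2 * math.pi / omega0     # period in seconds

print(f"k={k:.0f}  omega0={omega0:.3f}  c2={c2:.4f}")
print(f"R={R:.4f}  phi={phi:.4f}  T={T:.4f}")
```

Note that `omega0` can equally be computed as `math.sqrt(g / stretch)`, because $k/m = g/\Delta l$ at equilibrium.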

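In free, damped vibration, there is no external force ($F(t) = 0$), and the equation of motion becomes

$$my'' + cy' + ky = 0. \tag{3.8.5}$$

This equation is a homogeneous second-order linear differential equation. By solving the characteristic equation $mr^2 + cr + k = 0$, we obtain the roots

$$r = \frac{-c \pm \sqrt{c^2 - 4mk}}{2m}.$$

Depending on the discriminant $c^2 - 4mk$, there are three cases.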

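1. Critically damped ($c^2 - 4mk = 0$)

In this case, there is a repeated root $r = -\dfrac{c}{2m}$ of the characteristic equation $mr^2 + cr + k = 0$, and the general solution is

$$y(t) = e^{-ct/(2m)}\,(c_1 + c_2 t). \tag{3.8.6}$$

The motion in this case is said to be critically damped as the damping is just enough to prevent oscillation. This level of damping is achieved when the damping coefficient is $c = 2\sqrt{mk}$, the critical damping coefficient $c_{cr}$.

It is important to note that as time progresses ($t \to \infty$), the displacement $y(t)$ approaches zero.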

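2. Overdamped ($c^2 - 4mk > 0$)

In this case, there are two real distinct roots

$$r_1 = \frac{-c + \sqrt{c^2 - 4mk}}{2m}, \qquad r_2 = \frac{-c - \sqrt{c^2 - 4mk}}{2m},$$

and the general solution is

$$y(t) = c_1 e^{r_1 t} + c_2 e^{r_2 t}. \tag{3.8.7}$$

Given both $r_1$ and $r_2$ are negative, the displacement decays to zero as $t \to \infty$ without oscillating.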

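3. Underdamped ($c^2 - 4mk < 0$)

In this case, the roots of the characteristic equation are complex conjugates given by

$$r = -\frac{c}{2m} \pm i\omega_1, \qquad \omega_1 = \frac{\sqrt{4mk - c^2}}{2m}.$$

Thus the solution to the differential Equation 3.8.5 is

$$y(t) = e^{-ct/(2m)}\,(c_1\cos\omega_1 t + c_2\sin\omega_1 t). \tag{3.8.8}$$

The term $e^{-ct/(2m)}$ is a decaying exponential factor, so the oscillations shrink in amplitude over time.

Here again the solution can be written in amplitude-phase form, $y(t) = Re^{-ct/(2m)}\cos(\omega_1 t - \phi)$, with $R = \sqrt{c_1^2 + c_2^2}$, $c_1 = R\cos\phi$, and $c_2 = R\sin\phi$.

An underdamped system is characterized by a damping coefficient $c$ smaller than the critical value $2\sqrt{mk}$, so the mass oscillates about the equilibrium position with gradually decreasing amplitude.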

Such behavior is often preferred in various applications. In musical instruments, for example, the underdamped vibrations of strings or membranes contribute to a sustained, resonant sound. Similarly, seismic dampers in buildings employ a controlled underdamped response to safely dissipate energy from earthquakes, allowing structures to sway and reduce stress without collapsing.
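The three damping cases are distinguished only by the sign of the discriminant $c^2 - 4mk$, so the classification is easy to automate. The sketch below is illustrative: the function name and the tolerance used to detect a zero discriminant are assumptions, and the sample values ($m = 1$, $k = 64$, and $c = 16, 34, 4$) are taken from the examples that follow.

```python
import math

def classify_damping(m, c, k):
    """Classify m*y'' + c*y' + k*y = 0 by the sign of c^2 - 4*m*k."""
    if c == 0:
        return "undamped"
    disc = c * c - 4.0 * m * k
    # Treat a discriminant within a tiny tolerance of zero as exactly zero.
    if math.isclose(disc, 0.0, abs_tol=1e-12):
        return "critically damped"
    return "overdamped" if disc > 0 else "underdamped"

for c in (16.0, 34.0, 4.0):
    print(c, classify_damping(1.0, c, 64.0))
```

Equivalently, one can compare $c$ with the critical damping coefficient $2\sqrt{mk}$, which is the check used in the worked examples.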

A 1-kg mass is attached to a spring with a stiffness of 64 N/m and a dashpot with a damping constant of 16 N·s/m. The spring is compressed so that the object is 20 cm above its equilibrium position, and the object is released with an initial upward velocity of 2 m/s. Find the displacement of the object as a function of time.

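Given information: $m = 1\ \text{kg}$, $k = 64\ \text{N/m}$, $c = 16\ \text{N·s/m}$, $y(0) = 0.2\ \text{m}$, and $y'(0) = 2\ \text{m/s}$ (upward is positive).

The initial value problem for this system is

$$y'' + 16y' + 64y = 0, \quad y(0) = 0.2, \quad y'(0) = 2.$$

Before solving the IVP, we can calculate the critical damping coefficient to determine the type of damping.

$$c_{cr} = 2\sqrt{mk} = 2\sqrt{(1)(64)} = 16\ \text{N·s/m}$$

The damping coefficient equals the critical damping coefficient ($c = c_{cr} = 16$), so the system is critically damped.

Finding the general solution:

The general solution for a critically damped system is given by Equation 3.8.6. With the repeated root $r = -\dfrac{c}{2m} = -8$,

$$y(t) = e^{-8t}(c_1 + c_2 t).$$

Applying the initial conditions:

$$y(0) = c_1 = 0.2, \qquad y'(0) = -8c_1 + c_2 = 2 \;\Rightarrow\; c_2 = 3.6$$

The equation of the object's displacement is then

$$y(t) = e^{-8t}(0.2 + 3.6t).$$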

The graph of the displacement is shown for the first 3 seconds. As expected, the system smoothly and quickly returns to its equilibrium position without oscillation.
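As a rough check on this example, the sketch below recomputes the constants from the stated data and then tests the resulting formula against the differential equation using finite differences. It assumes upward displacement is positive and SI units. The helper names and the step `h` are illustrative choices.

```python
import math

m, c, k = 1.0, 16.0, 64.0
y0, v0 = 0.2, 2.0          # 20 cm above equilibrium, 2 m/s upward

r = -c / (2 * m)           # repeated root of m*r^2 + c*r + k = 0
c1 = y0                    # from y(0) = c1
c2 = v0 - r * y0           # from y'(0) = r*c1 + c2

def y(t):
    """Critically damped solution y = e^{rt} (c1 + c2 t)."""
    return math.exp(r * t) * (c1 + c2 * t)

def residual(t, h=1e-4):
    """Approximate m*y'' + c*y' + k*y at time t with central differences."""
    d1 = (y(t + h) - y(t - h)) / (2 * h)
    d2 = (y(t + h) - 2 * y(t) + y(t - h)) / (h * h)
    return m * d2 + c * d1 + k * y(t)

print(r, c1, c2)
print(residual(0.1), residual(1.0))   # both should be close to zero
```

Because $c_1$ and $c_2$ are both positive here, the factor $c_1 + c_2 t$ never vanishes for $t \ge 0$, which is consistent with the mass returning to equilibrium without crossing it.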


Find the displacement of the object in Example 3.8.2 if the spring is now attached to a dashpot with a damping constant of 34 N·s/m.

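Given information: $m = 1\ \text{kg}$, $k = 64\ \text{N/m}$, $c = 34\ \text{N·s/m}$, $y(0) = 0.2\ \text{m}$, and $y'(0) = 2\ \text{m/s}$ (upward is positive).

The initial value problem for this system is

$$y'' + 34y' + 64y = 0, \quad y(0) = 0.2, \quad y'(0) = 2.$$

In the previous example, we determined the critical damping coefficient to be $c_{cr} = 2\sqrt{mk} = 16\ \text{N·s/m}$. Since $c = 34 > c_{cr}$, the system is overdamped.

Finding the general solution:

The characteristic equation for the differential equation has two distinct real roots.

$$r^2 + 34r + 64 = (r + 2)(r + 32) = 0 \;\Rightarrow\; r_1 = -2, \quad r_2 = -32$$

The general solution to an overdamped system is given by Equation 3.8.7.

$$y(t) = c_1 e^{-2t} + c_2 e^{-32t}$$

Applying the initial conditions:

$$y(0) = c_1 + c_2 = 0.2, \qquad y'(0) = -2c_1 - 32c_2 = 2$$

Solving the system for constants $c_1$ and $c_2$ gives $c_1 = 0.28$ and $c_2 = -0.08$.

The equation of the object's displacement is then

$$y(t) = 0.28e^{-2t} - 0.08e^{-32t}.$$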

The graph below displays the displacement for the first 3 seconds. It confirms that the system returns to its equilibrium position gradually and without any oscillation. Compared to the critically damped system in Example 3.8.2, this overdamped system takes longer to settle. The increased damping force in the overdamped system delays the return to equilibrium.
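The slower settling of the overdamped system can be seen by evaluating both displacement formulas at a few later times. This is a sketch only: the two closed-form expressions are worked out here from the stated data ($m = 1$, $k = 64$, $y(0) = 0.2$, $y'(0) = 2$, upward positive) for $c = 16$ and $c = 34$, and the function names and sample times are arbitrary.

```python
import math

def y_critical(t):
    # c = 16: repeated root r = -8, constants c1 = 0.2, c2 = 3.6.
    return math.exp(-8 * t) * (0.2 + 3.6 * t)

def y_over(t):
    # c = 34: roots r = -2 and r = -32, constants c1 = 0.28, c2 = -0.08.
    return 0.28 * math.exp(-2 * t) - 0.08 * math.exp(-32 * t)

for t in (0.5, 1.0, 2.0, 3.0):
    print(f"t={t:.1f} s  critical={y_critical(t):.6f} m  overdamped={y_over(t):.6f} m")
```

At each of these sample times the overdamped displacement is the larger of the two, because its long-term behavior is governed by the slowly decaying term $e^{-2t}$, whereas the critically damped solution decays like $e^{-8t}$.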


Find the displacement of the object in Example 3.8.2 if the spring is now attached to a dashpot with a damping constant of 4 N·s/m.

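Given information: $m = 1\ \text{kg}$, $k = 64\ \text{N/m}$, $c = 4\ \text{N·s/m}$, $y(0) = 0.2\ \text{m}$, and $y'(0) = 2\ \text{m/s}$ (upward is positive).

The initial value problem for this system is

$$y'' + 4y' + 64y = 0, \quad y(0) = 0.2, \quad y'(0) = 2.$$

In Example 3.8.2, we determined the critical damping coefficient to be $c_{cr} = 2\sqrt{mk} = 16\ \text{N·s/m}$. Since $c = 4 < c_{cr}$, the system is underdamped.

Finding the general solution:

The characteristic equation for the differential equation has complex conjugate roots.

$$r^2 + 4r + 64 = 0 \;\Rightarrow\; r = -2 \pm 2\sqrt{15}\,i$$

The general solution to an underdamped system is given by Equation 3.8.8.

$$y(t) = e^{-2t}\left(c_1\cos 2\sqrt{15}\,t + c_2\sin 2\sqrt{15}\,t\right)$$

Applying the initial conditions:

$$y(0) = c_1 = 0.2, \qquad y'(0) = -2c_1 + 2\sqrt{15}\,c_2 = 2 \;\Rightarrow\; c_2 = \frac{1.2}{\sqrt{15}} = \frac{2\sqrt{15}}{25} \approx 0.310$$

The equation of the object's displacement is then

$$y(t) = e^{-2t}\left(0.2\cos 2\sqrt{15}\,t + \frac{2\sqrt{15}}{25}\sin 2\sqrt{15}\,t\right).$$

The amplitude-phase form of the equation is

$$y(t) \approx 0.369\,e^{-2t}\cos\left(2\sqrt{15}\,t - 0.998\right),$$

where $R = \sqrt{c_1^2 + c_2^2} = \sqrt{0.136} \approx 0.369$ and, since $c_1 > 0$ and $c_2 > 0$, $\phi = \arctan(c_2/c_1) \approx 0.998\ \text{rad}$ lies in the first quadrant.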

The graph illustrates the displacement of the system over the initial 3 seconds. This underdamped system lacks enough damping to stop oscillations, leading to a pattern of diminishing swings around the equilibrium position. These oscillations decrease in amplitude for approximately 2 seconds before the system finally settles at the equilibrium position.
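The settling time quoted above can be estimated from the decaying envelope $Re^{-ct/(2m)}$ that bounds the oscillation. The sketch below recomputes the constants from the stated data and evaluates the envelope at $t = 2$ s. It assumes upward displacement is positive and the amplitude-phase form $Re^{-ct/(2m)}\cos(\omega_1 t - \phi)$. The names `alpha`, `omega1`, and `envelope` are illustrative.

```python
import math

m, c, k = 1.0, 4.0, 64.0
y0, v0 = 0.2, 2.0

alpha = c / (2 * m)                                # decay rate in the exponent
omega1 = math.sqrt(4 * m * k - c * c) / (2 * m)    # frequency of the damped oscillation
c1 = y0                                            # from y(0) = c1
c2 = (v0 + alpha * y0) / omega1                    # from y'(0) = -alpha*c1 + omega1*c2
R = math.hypot(c1, c2)
phi = math.atan2(c2, c1)

def y(t):
    return R * math.exp(-alpha * t) * math.cos(omega1 * t - phi)

def envelope(t):
    # |y(t)| never exceeds this bound.
    return R * math.exp(-alpha * t)

print(f"omega1={omega1:.4f}  R={R:.4f}  phi={phi:.4f}")
print(f"envelope at 2 s as a fraction of R: {envelope(2.0) / R:.4f}")
```

The fraction printed on the last line equals $e^{-4}$, a little under 2% of the initial envelope, which matches the observation that the visible oscillations die out after about 2 seconds.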