P01: Perception and Attention Lab

Sevda Montakhaby Nodeh

Perception and Attention Lab

As a cognitive researcher at the Cognition and Attention Lab at McMaster University, you are at the forefront of exploring the intricacies of proactive control within attention processes. This line of research is profoundly significant, given that the human sensory system is overwhelmed with a vast array of information at any given second, exceeding what can be processed meaningfully. The essence of research in attention is to decipher the mechanisms through which sensory input is selectively navigated and managed. This is particularly crucial in understanding how individuals anticipate and adjust in preparation for upcoming tasks or stimuli, a phenomenon especially pertinent in environments brimming with potential distractions.

In everyday life, attentional conflicts are commonplace, manifesting when goal-irrelevant information competes with goal-relevant data for attentional precedence. An example of this (one that I’m sure we are all too familiar with) is the disruption from notifications on our mobile devices, which can divert us from achieving our primary goals, such as studying or driving. From a scientific standpoint, unravelling the strategies employed by the human cognitive system to optimize the selection and sustain goal-directed behaviour represents a formidable and compelling challenge.

Your ongoing research is a direct response to this challenge, delving into how proactive control influences the ability to concentrate on task-relevant information while effectively sidelining distractions. This facet of cognitive functionality is not just a theoretical construct; it’s the very foundation of daily human behaviour and interaction.

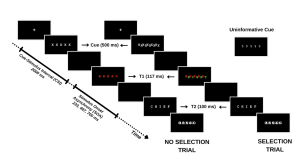

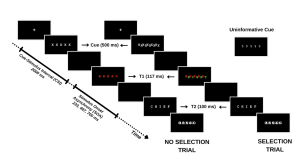

Your study methodically assesses this dynamic by engaging participants in a task where they must identify a sequence of words under varying conditions. The first word (T1) is presented in red, followed rapidly by a second word (T2) in white. The interval between the appearance of T1 and T2, known as the stimulus onset asynchrony (SOA), serves as a critical measure in your experiment. The distinctiveness of your study emerges in the way you manipulate the selective attention demands in each trial, classified into:

- No-Selection Trials: T1 appears alone, typically leading to superior identification accuracy for T1 and T2 due to a diminished cognitive load.

- Selection Trials: T1 is interspersed with a green distractor word. In these more demanding trials, the green distractor competes for attention with the red target word, resulting in decreased identification accuracy for both T1 and T2.

By introducing informative and uninformative cues, your investigation probes the role of proactive control. Informative cues give participants a preview of the upcoming trial type, allowing them to prepare mentally for the impending challenge. Conversely, uninformative cues act as control trials and offer no insight into the trial type. The hypothesis posits that such informative cues enable participants to proactively fine-tune their attentional focus in preparation for attentional conflict, potentially enhancing performance on selection trials with informative cues compared to those with uninformative cues.

For an overview of the different trial types please refer to the figure included below.

In this designed study, you are not only tackling the broader question of the role of conscious effort in attention but also contributing to a nuanced understanding of human cognitive processes, paving the way for applications that span from enhancing daily life productivity to optimizing technology interfaces for minimal cognitive disruption.

Getting Started: Loading Libraries, setting the working directory, and loading the dataset

Let’s begin by running the following code in RStudio to load the required libraries. Make sure to read through the comments embedded throughout the code to understand what each line of code is doing.

Note: Shaded boxes hold the R code, with the “#” sign indicating a comment that won’t execute in RStudio.

# Here we create a list called "my_packages" with all of our required libraries

my_packages <- c("tidyverse", "rstatix", "readxl", "xlsx", "emmeans", "afex",

"kableExtra", "grid", "gridExtra", "superb", "ggpubr", "lsmeans")

# Checking and extracting packages that are not already installed

not_installed <- my_packages[!(my_packages %in% installed.packages()[ , "Package"])]

# Install packages that are not already installed

if(length(not_installed)) install.packages(not_installed)

# Loading the required libraries

library(tidyverse) # for data manipulation

library(rstatix) # for statistical analyses

library(readxl) # to read excel files

library(xlsx) # to create excel files

library(kableExtra) # formatting html ANOVA tables

library(superb) # production of summary stat with adjusted error bars(Cousineau, Goulet, & Harding, 2021)

library(ggpubr) # for making plots

library(grid) # for plots

library(gridExtra) # for arranging multiple ggplots for extraction

library(lsmeans) # for pairwise comparisons

Make sure to have the required dataset (“ProactiveControlCueing.xlsx“) for this exercise downloaded. Set the working directory of your current R session to the folder with the downloaded dataset. You may do this manually in R studio by clicking on the “Session” tab at the top of the screen, and then clicking on “Set Working Directory”.

If the downloaded dataset file and your R session are within the same file, you may choose the option of setting your working directory to the “source file location” (the location where your current R session is saved). If they are in different folders then click on “choose directory” option and browse for the location of the downloaded dataset.

You may also do this by running the following code:

Once you have set your working directory either manually or by code, in the Console below you will see the full directory of your folder as the output.

Read in the downloaded dataset as “cueingData” and complete the accompanying exercises to the best of your abilities.

# Read xlsx file

cueingData = read_excel("ProactiveControlCueing.xlsx")

Files to download:

- ProactiveControlCueing.xlsx

An interactive H5P element has been excluded from this version of the text. You can view it online here:

https://ecampusontario.pressbooks.pub/radpnb/?p=22#h5p-1

Answer Key

Exercise 1: Data Preparation and Exploration

Note: Shaded boxes hold the R code, while the white boxes display the code’s output, just as it appears in RStudio.

The “#” sign indicates a comment that won’t execute in RStudio.

1. Display the first few rows to understand your dataset.

head(cueingData) #Displaying the first few rows

## # A tibble: 6 × 6

## ID CUE_TYPE TRIAL_TYPE SOA T1Score T2Score

## <dbl> <chr> <chr> <dbl> <dbl> <dbl>

## 1 1 INFORMATIVE NS 233 100 94.6

## 2 2 INFORMATIVE NS 233 100 97.2

## 3 3 INFORMATIVE NS 233 89.2 93.9

## 4 4 INFORMATIVE NS 233 100 91.9

## 5 5 INFORMATIVE NS 233 100 100

## 6 6 INFORMATIVE NS 233 100 97.3

2. Set up your factors and check for structure. Make sure your dependent measures are in numerical format, and that your factors and levels are set up correctly.

cueingData <- cueingData %>%

convert_as_factor(ID, CUE_TYPE, TRIAL_TYPE, SOA) #setting up factors

str(cueingData) #checking that factors and levels are set-up correctly. Checking to see that dependent measures are in numerical format.

## # A tibble: 6 × 6

## ID CUE_TYPE TRIAL_TYPE SOA T1Score T2Score

## <dbl> <chr> <chr> <dbl> <dbl> <dbl>

## 1 1 INFORMATIVE NS 233 100 94.6

## 2 2 INFORMATIVE NS 233 100 97.2

## 3 3 INFORMATIVE NS 233 89.2 93.9

## 4 4 INFORMATIVE NS 233 100 91.9

## 5 5 INFORMATIVE NS 233 100 100

## 6 6 INFORMATIVE NS 233 100 97.3

## tibble [192 × 6] (S3: tbl_df/tbl/data.frame)

## $ ID : Factor w/ 16 levels "1","2","3","4",..: 1 2 3 4 5 6 7 8 9 10 ...

## $ CUE_TYPE : Factor w/ 2 levels "INFORMATIVE",..: 1 1 1 1 1 1 1 1 1 1 ...

## $ TRIAL_TYPE: Factor w/ 2 levels "NS","S": 1 1 1 1 1 1 1 1 1 1 ...

## $ SOA : Factor w/ 3 levels "233","467","700": 1 1 1 1 1 1 1 1 1 1 ...

## $ T1Score : num [1:192] 100 100 89.2 100 100 ...

## $ T2Score : num [1:192] 94.6 97.2 93.9 91.9 100 ...

3. Perform basic data checks for missing values and data consistency.

sum(is.na(cueingData)) # Checking for missing values in the dataset

summary(cueingData) # Viewing the summary of the dataset to check for inconsistencies

## ID CUE_TYPE TRIAL_TYPE SOA T1Score

## 1 : 12 INFORMATIVE :96 NS:96 233:64 Min. : 32.43

## 2 : 12 UNINFORMATIVE:96 S :96 467:64 1st Qu.: 77.78

## 3 : 12 700:64 Median : 95.87

## 4 : 12 Mean : 86.33

## 5 : 12 3rd Qu.:100.00

## 6 : 12 Max. :100.00

## (Other):120

## T2Score

## Min. : 29.63

## 1st Qu.: 83.97

## Median : 95.76

## Mean : 87.84

## 3rd Qu.:100.00

## Max. :100.00

- Is your data a balanced or an unbalanced design? (Hint: Use code to show the number of observations per combination of factors)

table(cueingData$CUE_TYPE, cueingData$TRIAL_TYPE, cueingData$SOA) #checking the number of observations per condition or combination of factors. Data is a balanced design since there is an equal number of observations per cell.

## , , = 233

##

##

## NS S

## INFORMATIVE 16 16

## UNINFORMATIVE 16 16

##

## , , = 467

##

##

## NS S

## INFORMATIVE 16 16

## UNINFORMATIVE 16 16

##

## , , = 700

##

##

## NS S

## INFORMATIVE 16 16

## UNINFORMATIVE 16 16

Exercise 2: Computing Summary Statistics

5. The Superb library requires your dataset to be in a wide format. So convert your dataset from a long to a wide format. Save it as “cueingData.wide”.

cueingData.wide <- cueingData %>%

pivot_wider(names_from = c(TRIAL_TYPE, SOA, CUE_TYPE),

values_from = c(T1Score, T2Score) )

6. Using superbPlot() and cueingData.wide calculate the mean, and standard error of the mean (SEM) measure for T1 and T2 scores at each level of the factors. Make sure to calculate Cousineau-Morey corrected SEM values.

- You must do this separately for each of your dependent measures. Save your superbplot function for T1Score as “EXP1.T1.plot” and as “EXP1.T2.plot” for T2Score.

- Re-name the levels of the factors in each plot. Currently, the levels are numbered. We want the levels of SOA to be 233, 467, and 700; the levels of cue-type as Informative and Uninformative, and the levels of trial-type as Selection and No Selection (Hint: to access the summary data use EXP1.T1.plotinsertfactorname).

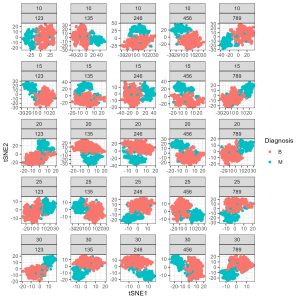

EXP1.T1.plot <- superbPlot(cueingData.wide,

WSFactors = c("SOA(3)", "CueType(2)", "TrialType(2)"),

variables = c("T1Score_NS_233_INFORMATIVE", "T1Score_NS_467_INFORMATIVE",

"T1Score_NS_700_INFORMATIVE", "T1Score_NS_233_UNINFORMATIVE",

"T1Score_NS_467_UNINFORMATIVE", "T1Score_NS_700_UNINFORMATIVE",

"T1Score_S_233_INFORMATIVE", "T1Score_S_467_INFORMATIVE",

"T1Score_S_700_INFORMATIVE", "T1Score_S_233_UNINFORMATIVE",

"T1Score_S_467_UNINFORMATIVE", "T1Score_S_700_UNINFORMATIVE"),

statistic = "mean",

errorbar = "SE",

adjustments = list(

purpose = "difference",

decorrelation = "CM",

popSize = 32

),

plotStyle = "line",

factorOrder = c("SOA", "CueType", "TrialType"),

lineParams = list(size=1, linetype="dashed"),

pointParams = list(size = 3))

## superb::FYI: Here is how the within-subject variables are understood:

## SOA CueType TrialType variable

## 1 1 1 T1Score_NS_233_INFORMATIVE

## 2 1 1 T1Score_NS_467_INFORMATIVE

## 3 1 1 T1Score_NS_700_INFORMATIVE

## 1 2 1 T1Score_NS_233_UNINFORMATIVE

## 2 2 1 T1Score_NS_467_UNINFORMATIVE

## 3 2 1 T1Score_NS_700_UNINFORMATIVE

## 1 1 2 T1Score_S_233_INFORMATIVE

## 2 1 2 T1Score_S_467_INFORMATIVE

## 3 1 2 T1Score_S_700_INFORMATIVE

## 1 2 2 T1Score_S_233_UNINFORMATIVE

## 2 2 2 T1Score_S_467_UNINFORMATIVE

## 3 2 2 T1Score_S_700_UNINFORMATIVE

## superb::FYI: The HyunhFeldtEpsilon measure of sphericity per group are 0.134

## superb::FYI: Some of the groups' data are not spherical. Use error bars with caution.

EXP1.T2.plot <- superbPlot(cueingData.wide,

WSFactors = c("SOA(3)", "CueType(2)", "TrialType(2)"),

variables = c("T2Score_NS_233_INFORMATIVE", "T2Score_NS_467_INFORMATIVE",

"T2Score_NS_700_INFORMATIVE", "T2Score_NS_233_UNINFORMATIVE",

"T2Score_NS_467_UNINFORMATIVE", "T2Score_NS_700_UNINFORMATIVE",

"T2Score_S_233_INFORMATIVE", "T2Score_S_467_INFORMATIVE",

"T2Score_S_700_INFORMATIVE", "T2Score_S_233_UNINFORMATIVE",

"T2Score_S_467_UNINFORMATIVE", "T2Score_S_700_UNINFORMATIVE"),

statistic = "mean",

errorbar = "SE",

adjustments = list(

purpose = "difference",

decorrelation = "CM",

popSize = 32

),

plotStyle = "line",

factorOrder = c("SOA", "CueType", "TrialType"),

lineParams = list(size=1, linetype="dashed"),

pointParams = list(size = 3)

)

## superb::FYI: Here is how the within-subject variables are understood:

## SOA CueType TrialType variable

## 1 1 1 T2Score_NS_233_INFORMATIVE

## 2 1 1 T2Score_NS_467_INFORMATIVE

## 3 1 1 T2Score_NS_700_INFORMATIVE

## 1 2 1 T2Score_NS_233_UNINFORMATIVE

## 2 2 1 T2Score_NS_467_UNINFORMATIVE

## 3 2 1 T2Score_NS_700_UNINFORMATIVE

## 1 1 2 T2Score_S_233_INFORMATIVE

## 2 1 2 T2Score_S_467_INFORMATIVE

## 3 1 2 T2Score_S_700_INFORMATIVE

## 1 2 2 T2Score_S_233_UNINFORMATIVE

## 2 2 2 T2Score_S_467_UNINFORMATIVE

## 3 2 2 T2Score_S_700_UNINFORMATIVE

## superb::FYI: The HyunhFeldtEpsilon measure of sphericity per group are 0.226

## superb::FYI: Some of the groups' data are not spherical. Use error bars with caution.

# Re-naming levels of the factors

levels(EXP1.T1.plot$data$SOA) <- c("1" = "233", "2" = "467", "3" = "700")

levels(EXP1.T2.plot$data$SOA) <- c("1" = "233", "2" = "467", "3" = "700")

levels(EXP1.T1.plot$data$TrialType) <- c("1" = "No Selection", "2" = "Selection")

levels(EXP1.T2.plot$data$TrialType) <- c("1" = "No Selection", "2" = "Selection")

levels(EXP1.T1.plot$data$CueType) <- c("1" = "Informative", "2" = "Uninformative")

levels(EXP1.T2.plot$data$CueType) <- c("1" = "Informative", "2" = "Uninformative")

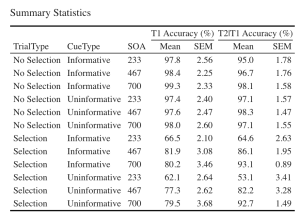

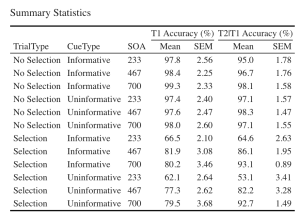

7. Let’s create a beautiful printable HTML table of the summary stats for T1 and T2 Scores. This summary table can then be used in your manuscript. I suggest that you visit the following link for guides on how to create printable tables.

- (a) Begin by extracting the summary stats data with group means and CousineauMorey SEM values from each plot function and save them as a data frame separately for T1 and T2 data (you should have two data frames with your summary stats named “EXP1.T1.summaryData” and “EXP1.T2.summaryData”)

- (b) In your two data frames with the summary stats, round your means to 1 decimal place and your SEM values to two decimal places.

- (c) Merge T1Score and T2Score summary data and save it as “EXP1_summarystat_results”

- (d) From this merged table, delete the columns with the negative SEM values (lowerwidth SEM values)

- (e) From this merged table, delete the columns with the negative SEM values (lowerwidth SEM values)

- (f) Rename the columns in this merged data frame such that the name of the columns with T1Score and T2Score means is “Means” and the columns with SEM scores for either dependent variable is “SEM”.

- (g) Caption your table as “Summary Statistics”

- (h) Set the font of your text to “Cambria” and the font size to 14

- (i) Set the headers for T1Score means and SEM columns as “T1 Accuracy (%)”.

- (j) Set the headers for T2Score means and SEM columns as “T2 Accuracy (%)”.

# Extracting summary data with CousineauMorey SEM Bars

EXP1.T1.summaryData <- data.frame(EXP1.T1.plot$data)

EXP1.T2.summaryData <- data.frame(EXP1.T2.plot$data)

# Rounding values in each column

# round(x, 1) rounds to the specified number of decimal places

EXP1.T1.summaryData$center <- round(EXP1.T1.summaryData$center,1)

EXP1.T1.summaryData$upperwidth <- round(EXP1.T1.summaryData$upperwidth,2)

EXP1.T2.summaryData$center <- round(EXP1.T2.summaryData$center,1)

EXP1.T2.summaryData$upperwidth <- round(EXP1.T2.summaryData$upperwidth,2)

# merging T1 and T2|T1 summary tables

EXP1_summarystat_results <- merge(EXP1.T1.summaryData, EXP1.T2.summaryData, by=c("TrialType","CueType","SOA"))

# Rename the column name

colnames(EXP1_summarystat_results)[colnames(EXP1_summarystat_results) == "center.x"] ="Mean"

colnames(EXP1_summarystat_results)[colnames(EXP1_summarystat_results) == "center.y"] ="Mean"

colnames(EXP1_summarystat_results)[colnames(EXP1_summarystat_results) == "upperwidth.x"] ="SEM"

colnames(EXP1_summarystat_results)[colnames(EXP1_summarystat_results) == "upperwidth.y"] ="SEM"

# deleting columns by name "lowerwidth.x" and "lowerwidth.y" in each summary table

EXP1_summarystat_results <- EXP1_summarystat_results[ , ! names(EXP1_summarystat_results) %in% c("lowerwidth.x", "lowerwidth.y")]

#removing suffixes from column names

colnames(EXP1_summarystat_results)<-gsub(".1","",colnames(EXP1_summarystat_results))

# Printable ANOVA html

EXP1_summarystat_results %>%

kbl(caption = "Summary Statistics") %>%

kable_classic(full_width = F, html_font = "Cambria", font_size = 14) %>%

add_header_above(c(" " = 3, "T1 Accuracy (%)" = 2, "T2|T1 Accuracy (%)" = 2))

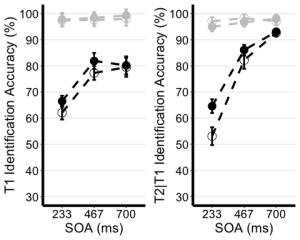

Exercise 3: Visualizing Your Data

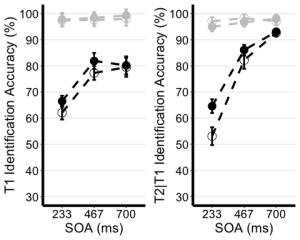

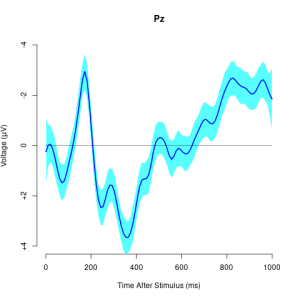

8. Use the unedited summary statistics table from Exercise 2 (EXP1.T1.summaryData and EXP1.T3.summaryData) and the ggplot() function to create separate summary line plots for T1 and T2 scores. The Line plot will visualize the relationship between SOA and the dependent measure while considering the factors of cue-type and trial-type. Your plot must have the following characteristics:

- (a) Plot SOA on the x-axis and label the x-axis as “SOA (ms)”

- (b) Plot the dependent measure on the y-axis and label the y-axis as “T1 Identification Accuracy” for T1 plot, and “T2|T1 Identification Accuracy (%)” for the T2 plot.

- (c) Define the colour of your lines by trial type.

- (d) Define the shape of the points for each value in the line by cue–type

- (e) Use the geom_point() function to customize your point shapes. Use solid circles for informative cues, and hollow circles for uninformative cues.

- (f) Use scale_color_manual() to customize line colours. Set the colour for lines plotting selection trials to ‘black’, and the lines for no-selection trials to ‘gray78’.

- (g) Use geom_line() to customize line type. Set line type to dashed and line width to 1.2.

- (h) Customize your y-axis. Set the minimum value to 30 and the maximum value to 100.

- (i) Use the scale_y_continuous() function to have the values on the y-axis increase in increments of 10.

- (j) Use the geom_errorbar() function to plot error bars using your calculated SEM values from the summary table.

- (k) Set plot theme to theme_classic()

- (l) Use the theme() function to customize the x-axis font size and line width. Change the font size of the axis main title to 16, and the x-axis labels to 14

- (m) Add horizontal grid lines.

- (n) Do not include a figure legend.

- (o) Store your two plots as “T1.ggplotplot” and “T2.ggplotplot”

EXP1.T1.ggplotplot <- ggplot(EXP1.T1.summaryData, aes(x=SOA, y=center, color=TrialType, shape = CueType,

group=interaction(CueType, TrialType))) +

geom_point(data=filter(EXP1.T1.summaryData, CueType == "Uninformative"), shape=1, size=4.5) + # assigning shape type to level of factor

geom_point(data=filter(EXP1.T1.summaryData, CueType == "Informative"), shape=16, size=4.5) + # assigning shape type to level of factor

geom_line(linetype="dashed", linewidth=1.2) + # change line thickness and line style

scale_color_manual(values = c("gray78", "black") ) +

xlab("SOA (ms)") +

ylab("T1 Identification Accuracy (%)") +

theme_classic() + # It has no background, no bounding box.

theme(axis.line=element_line(size=1.5), # We make the axes thicker...

axis.text = element_text(size = 14, colour = "black"), # their text bigger...

axis.title = element_text(size = 16, colour = "black"), # their labels bigger...

panel.grid.major.y = element_line(), # adding horizontal grid lines

legend.position = "none") +

coord_cartesian(ylim=c(30, 100)) +

scale_y_continuous(breaks=seq(30, 100, 10)) + # Ticks from 30-100, every 10

geom_errorbar(aes(ymin=center+lowerwidth, ymax=center+upperwidth), width = 0.12, size = 1) # adding error bars from summary table

EXP1.T2.ggplotplot <- ggplot(EXP1.T2.summaryData, aes(x=SOA, y=center, color=TrialType, shape=CueType,

group=interaction(CueType, TrialType))) +

geom_point(data=filter(EXP1.T2.summaryData, CueType == "Uninformative"), shape=1, size=4.5) + # assigning shape type to level of factor

geom_point(data=filter(EXP1.T2.summaryData, CueType == "Informative"), shape=16, size=4.5) + # assigning shape type to level of factor

geom_line(linetype="dashed", linewidth=1.2) + # change line thickness and line style

scale_color_manual(values = c("gray78", "black")) +

xlab("SOA (ms)") +

ylab("T2|T1 Identification Accuracy (%)") +

theme_classic() + # It has no background, no bounding box.

theme(axis.line=element_line(size=1.5), # We make the axes thicker...

axis.text = element_text(size = 14, colour = "black"), # their text bigger...

axis.title = element_text(size = 16, colour = "black"), # their labels bigger...

panel.grid.major.y = element_line(), # adding horizontal grid lines

legend.position = "none") +

guides(fill = guide_legend(override.aes = list(shape = 16) ),

shape = guide_legend(override.aes = list(fill = "black"))) +

coord_cartesian(ylim=c(30, 100)) +

scale_y_continuous(breaks=seq(30, 100, 10)) + # Ticks from 30-100, every 10

geom_errorbar(aes(ymin=center+lowerwidth, ymax=center+upperwidth), width = 0.12, size = 1) # adding error bars from summary table

9. Use ggarrange() to display your plots together.

ggarrange(EXP1.T1.ggplotplot, EXP1.T2.ggplotplot,

nrow = 1, ncol = 2, common.legend = F,

widths = 8, heights = 5)

Exercise 4: Main Analysis

10. Use the data in long format (“cueingData”) and the anova_test() function, and compute a two-way ANOVA for each dependent variable, but on selection trials only. Set up cue-type and SOA as within-participants factors. (Hint: use the filter() function)

- (a) Set your effect size measure to partial eta squared (pes)

- (b) Make sure to generate the detailed ANOVA table.

- (c) Store your computations and “T1_2anova” and “T2_2anova”.

- (d) Using get_anova_table() function display your ANOVA tables.

T1_2anova <- anova_test(

data = filter(cueingData, TRIAL_TYPE == "S"), dv = T1Score, wid = ID,

within = c(CUE_TYPE, SOA), detailed = TRUE, effect.size = "pes")

T2_2anova <- anova_test(

data = filter(cueingData, TRIAL_TYPE == "S"), dv = T2Score, wid = ID,

within = c(CUE_TYPE, SOA), detailed = TRUE, effect.size = "pes")

get_anova_table(T1_2anova)

## ANOVA Table (type III tests)

##

## Effect DFn DFd SSn SSd F p p<.05 pes

## 1 (Intercept) 1.00 15.00 534043.211 30705.523 260.886 6.80e-11 * 0.946

## 2 CUE_TYPE 1.00 15.00 253.665 506.454 7.513 1.50e-02 * 0.334

## 3 SOA 1.29 19.35 5091.853 2660.275 28.710 1.16e-05 * 0.657

## 4 CUE_TYPE:SOA 2.00 30.00 77.140 1061.051 1.091 3.49e-01 0.068

get_anova_table(T2_2anova)

## ANOVA Table (type III tests)

##

## Effect DFn DFd SSn SSd F p p<.05 pes

## 1 (Intercept) 1 15 593913.106 11288.706 789.169 2.19e-14 * 0.981

## 2 CUE_TYPE 1 15 661.761 426.947 23.250 2.24e-04 * 0.608

## 3 SOA 2 30 20001.563 3852.629 77.875 1.33e-12 * 0.838

## 4 CUE_TYPE:SOA 2 30 519.154 1298.144 5.999 6.00e-03 * 0.286

Exercise 5: Post-hoc tests

11. Filter for selection trials first, then group your data by SOA and use the pairwise_t_test() function to compare informative and uninformative trials at each level of SOA store and display your computation as “T2_sel_pwc”.

T2_sel_pwc <- filter(cueingData, TRIAL_TYPE == "S") %>%

group_by(SOA) %>%

pairwise_t_test(T2Score ~ CUE_TYPE, paired = TRUE, p.adjust.method = "holm", detailed = TRUE) %>%

add_significance("p.adj")

T2_sel_pwc <- get_anova_table(T2_sel_pwc)

T2_sel_pwc

## # A tibble: 3 × 16

## SOA estimate .y. group1 group2 n1 n2 statistic p df

## <fct> <dbl> <chr> <chr> <chr> <int> <int> <dbl> <dbl> <dbl>

## 1 233 11.5 T2Score INFORMATIVE UNINFO… 16 16 4.36 5.55e-4 15

## 2 467 3.89 T2Score INFORMATIVE UNINFO… 16 16 1.97 6.8 e-2 15

## 3 700 0.356 T2Score INFORMATIVE UNINFO… 16 16 0.190 8.52e-1 15

## # ℹ 6 more variables: conf.low <dbl>, conf.high <dbl>, method <chr>,

## # alternative <chr>, p.adj <dbl>, p.adj.signif <chr>

P02: Cognition and Memory Lab

Sevda Montakhaby Nodeh

Cognition and Memory Lab

Welcome! In this assignment, we will be entering a cognition and memory lab at McMaster University. Specifically, we will be examining data from an intriguing cognitive psychology study that explores the role of repetition in recognition memory.

Most of us are familiar with the phrase ‘practice makes perfect’. This motivational idiom aligns with intuition and is confirmed by many real-world observations. Much empirical research also supports this view–repeated opportunities to encode a stimulus improve subsequent memory retrieval and perceptual identification. These observations suggest that stimulus repetition strengthens underlying memory representations.

The present study focuses on a contradictory idea, that stimulus repetition can weaken memory encoding. The experiment comprised of three stages: a study phase, a distractor phase, and a surprise recognition memory test.

In the study phase participants aloud a red target word preceded by a briefly presented green prime word. On half of the trials, the prime and target were the same (repeated trials), and on the other half of the trials, the prime and target were different (not-repeated trials). In the figure below you can see an overview of the two different trial types. Following the study phase, participants engaged in a 10-minute distractor task consisting of math problems they had to solve by hand.

The final phase was a surprise recognition memory test where on each test trial they were shown a red word and asked to respond old if the word on the test was one they had previously seen at study, and new if they had never encountered the word before. Half of the trials at the test were words from the study phase and the other half were new words.

Let’s begin by running the following code to load the required libraries. Make sure to read through the comments embedded throughout the code to understand what each line of code is doing.

Note: Shaded boxes hold the R code, with the “#” sign indicating a comment that won’t execute in RStudio.

# Load necessary libraries

library(rstatix) #for performing basic statistical tests

library(dplyr) #for sorting data

library(readxl) #for reading excel files

library(tidyr) #for data sorting and structure

library(ggplot2) #for visualizing your data

library(plotrix) #for computing basic summary stats

Make sure to have the required dataset (“RepDecrementdataset.xlsx”) for this exercise downloaded. Set the working directory of your current R session to the folder with the downloaded dataset. You may do this manually in R studio by clicking on the “Session” tab at the top of the screen, and then clicking on “Set Working Directory”.

If the downloaded dataset file and your R session are within the same file, you may choose the option of setting your working directory to the “source file location” (the location where your current R session is saved). If they are in different folders then click on “choose directory” option and browse for the location of the downloaded dataset.

You may also do this by running the following code

Once you have set your working directory either manually or by code, in the Console below you will see the full directory of your folder as the output.

Read in the downloaded dataset as “MemoryData” and complete the accompanying exercises to the best of your abilities.

MemoryData <- read_excel('RepDecrementdataset.xlsx')

Files to Download:

- RepDecrementdataset.xlsx

An interactive H5P element has been excluded from this version of the text. You can view it online here:

https://ecampusontario.pressbooks.pub/radpnb/?p=26#h5p-2

Answer Key

Exercise 1: Data Preparation and Exploration

Note: Shaded boxes hold the R code, while the white boxes display the code’s output, just as it appears in RStudio.

The “#” sign indicates a comment that won’t execute in RStudio.

1. Display the first few rows of your dataset to familiarize yourself with its structure and contents.

head(MemoryData) #Displaying the first few rows

## # A tibble: 6 × 7

## ID Hits_NRep Hits_Rep FalseAlarms Misses_Nrep Misses_Rep CorrectRej

## <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

## 1 1 46 34 13 14 26 107

## 2 2 43 44 27 17 16 93

## 3 3 43 35 23 17 24 97

## 4 4 37 36 56 23 24 64

## 5 5 39 35 49 21 25 71

## 6 6 38 43 28 22 17 92

str(MemoryData) #Checking structure of dataset

## tibble [24 × 7] (S3: tbl_df/tbl/data.frame)

## $ ID : num [1:24] 1 2 3 4 5 6 7 8 9 10 ...

## $ Hits_NRep : num [1:24] 46 43 43 37 39 38 20 24 36 38 ...

## $ Hits_Rep : num [1:24] 34 44 35 36 35 43 11 29 43 27 ...

## $ FalseAlarms: num [1:24] 13 27 23 56 49 28 4 11 46 9 ...

## $ Misses_Nrep: num [1:24] 14 17 17 23 21 22 40 36 23 22 ...

## $ Misses_Rep : num [1:24] 26 16 24 24 25 17 49 31 17 33 ...

## $ CorrectRej : num [1:24] 107 93 97 64 71 92 116 109 74 111 ...

## [1] "ID" "Hits_NRep" "Hits_Rep" "FalseAlarms" "Misses_Nrep"

## [6] "Misses_Rep" "CorrectRej"

2. Calculate Total Trials for Each Condition:

- (a) For each participant, sum the number of hits for non-repeated trials and missed non-repeated trials. Store this total in a new column named “TotalNRep”. The value should be 60 for all participants, reflecting the total number of non-repeated trial types.

- (b) Repeat the process for repeated trials, storing the sum in “TotalRep” (60 trials).

- (c) Similarly, sum the number of false alarms and correct rejections to represent the total number of new trials (120 trials) and store this in “TotalNew”.

Note that if the value in “TotalNRep” and “TotalRep” is less than 60 for a participant, it indicates that certain word trials were excluded during the study phase due to issues (e.g., the participant read aloud the prime word instead of the target, leading to trial spoilage).

MemoryData <- MemoryData %>%

mutate(TotalNRep = Hits_NRep + Misses_Nrep)

MemoryData <- MemoryData %>%

mutate(TotalRep = Hits_Rep + Misses_Rep)

MemoryData <- MemoryData %>%

mutate(TotalNew = FalseAlarms + CorrectRej)

3. Transform the counts in the hits, misses, false alarms, and correct rejections columns into proportions. Do this by dividing each count by the total number of trials for the respective condition (e.g., divide hits for non-repeated trials by “TotalNRep”).

MemoryData$Hits_NRep <- (MemoryData$Hits_NRep/MemoryData$TotalNRep)

MemoryData$Misses_Nrep <- (MemoryData$Misses_Nrep/MemoryData$TotalNRep)

MemoryData$Hits_Rep <- (MemoryData$Hits_Rep/MemoryData$TotalRep)

MemoryData$Misses_Rep <- (MemoryData$Misses_Rep/MemoryData$TotalRep)

MemoryData$CorrectRej <- (MemoryData$CorrectRej/MemoryData$TotalNew)

MemoryData$FalseAlarms <- (MemoryData$FalseAlarms/MemoryData$TotalNew)

4. Once the proportions are calculated, remove the “TotalNew”, “TotalRep”, and “TotalNRep” columns from the dataset as they are no longer needed for further analysis.

MemoryData <- MemoryData[, !names(MemoryData) %in% c("TotalNew", "TotalRep","TotalNRep")]

5. Use the pivot_longer() function from the tidyr package to convert your data from wide to long format. Pivot the columns “Hits_NRep”, “Hits_Rep”, and “FalseAlarms”, setting the new column names to “Condition” and “Proportion” for the reshaped data.

long_df <- MemoryData %>%

pivot_longer(

cols = c(Hits_NRep, Hits_Rep, FalseAlarms),

names_to = "Condition",

values_to = "Proportion"

)

Exercise 2: Computing Summary Stats and Correcting for Within-Subjects Variability

6. Using your long formatted dataset, group your data by ID and calculate the per-subject mean and the grand mean of the Proportions column.

- (a) Adjust each individual’s score by subtracting their mean and adding the grand mean.

- (b) Calculate the mean and SEM of the adjusted scores for each condition.

- (c) Use the adjusted scores to calculate the within-subject SEM. d.Group the data by Condition and calculate the mean and SEM.

data_adjusted <- long_df %>%

group_by(ID) %>%

mutate(SubjectMean = mean(Proportion, na.rm = TRUE)) %>%

ungroup() %>%

mutate(GrandMean = mean(Proportion, na.rm = TRUE)) %>%

mutate(AdjustedScore = Proportion - SubjectMean + GrandMean)

# Calculate the mean and SEM of the adjusted scores

summary_df <- data_adjusted %>%

group_by(Condition) %>%

summarize(

AdjustedMean = mean(AdjustedScore, na.rm = TRUE),

AdjustedSEM = sd(AdjustedScore, na.rm = TRUE) / sqrt(n())

)

Exercise 3: Visualizing your data

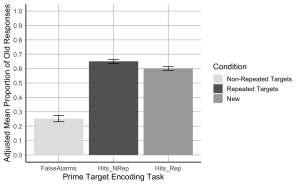

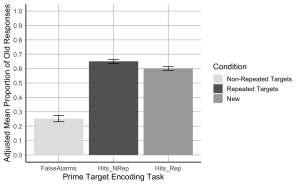

7. Create a bar plot where the x-axis represents the Prime-target Encoding task conditions, the y-axis shows the adjusted mean proportion of old responses, and include error bars represent the adjusted SEM. Begin by setting custom colours for each condition. The colour for the bar presenting the false alarms or “New” should be “gray89”; the colour for “Hits_Nrep” or “Non-Repeated Targets” bar should be “gray39”; the colour for the “Hits_Rep” or “Repeated Targets” bar should be “darkgrey”.

- (a) The x-axis should be titled “Prime Target Encoding Task”.

- (b) The y-axis should be titled “Adjusted Mean Proportion of Old Responses”

- (c) Add error bars to each bar to represent the corrected SEM.

- (d) Make the x and y-axis lines solid black.

- (e) Ensure the plot has a minimalistic design with major grid lines only.

- (f) Add a legend to indicate the Condition categories, the legend should read, Non-Repeated Targets instead of “Hits_Nrep”, Repeated Targets instead of “Hits_Rep”, and New instead of “False Alarms”.

- (g) Set the minimum and maximum values on your y-axis to 0 and 1, respectively.

- (h)The values on the y-axis should go up by 0.1

# Create the bar plot with adjusted SEM error bars

ggplot(summary_df, aes(x = Condition, y = AdjustedMean, fill = Condition)) +

geom_bar(stat = "identity", position = position_dodge()) +

geom_errorbar(aes(ymin = AdjustedMean - AdjustedSEM, ymax = AdjustedMean + AdjustedSEM), width = 0.2, position = position_dodge(0.9)) +

scale_fill_manual(values = c("Hits_NRep" = "gray39", "Hits_Rep" = "darkgrey", "FalseAlarms" = "gray89"),

labels = c("Non-Repeated Targets", "Repeated Targets", "New")) +

labs(

x = "Prime Target Encoding Task",

y = "Adjusted Mean Proportion of Old Responses",

fill = "Condition"

) +

scale_y_continuous(breaks = seq(0, 1, by = 0.1), limits = c(0, 1)) +

theme_minimal(base_size = 14) +

theme(

axis.line = element_line(color = "black"),

axis.title = element_text(color = "black"),

panel.grid.major = element_line(color = "grey", size = 0.5),

panel.grid.minor = element_blank(),

legend.title = element_text(color = "black")

)

Exercise 4: Computation

8. Using the wide formatted data file “MemoryData” conduct a two-paired sample t-test comparing the hit rate collapsed across the two repetition conditions (repeated/not-repeated) to the false alarm rate to assess participants’ ability to distinguish old from new items.

- (a) Calculate the mean hit rate by averaging the hit rates from the ‘Hits_NRep’ (non-repeated) and ‘Hits_Rep’ (repeated) conditions for each participant.

- (b) Conduct a paired sample t-test to compare the hit rate (collapsed across the two repetition conditions) to the false alarm rate to assess participants’ ability to distinguish old from new items.

collapsed_hitdata <- MemoryData %>%

mutate(HitRate = (Hits_NRep + Hits_Rep) / 2)

# Conduct paired sample t-tests

t_test_results <- t.test(collapsed_hitdata$HitRate, collapsed_hitdata$FalseAlarms, paired = TRUE)

print(t_test_results)

##

## Paired t-test

##

## data: collapsed_hitdata$HitRate and collapsed_hitdata$FalseAlarms

## t = 11.621, df = 23, p-value = 4.179e-11

## alternative hypothesis: true mean difference is not equal to 0

## 95 percent confidence interval:

## 0.3071651 0.4401983

## sample estimates:

## mean difference

## 0.3736817

#Hit rates were higher than false alarm rates, t(23) = 11.62, p < .001.

9. Using the wide formatted data file “MemoryData” conduct a two-paired sample t-test comparing the hit rates for the not-repeated and repeated targets.

# Conduct paired sample t-tests for non-repeated vs repeated hit rates

t_test_results_hits <- t.test(collapsed_hitdata$Hits_NRep, collapsed_hitdata$Hits_Rep, paired = TRUE)

# Print the results for the hit rate comparison

print(t_test_results_hits)

##

## Paired t-test

##

## data: collapsed_hitdata$Hits_NRep and collapsed_hitdata$Hits_Rep

## t = 2.5431, df = 23, p-value = 0.01817

## alternative hypothesis: true mean difference is not equal to 0

## 95 percent confidence interval:

## 0.009071364 0.088174399

## sample estimates:

## mean difference

## 0.04862288

#Hit rates were higher for not-repeated targets than for repeated targets, t(23) = 2.54, p = .018.

P03: Early Childhood Development Lab

Sevda Montakhaby Nodeh

Early Childhood Development Lab

You are a researcher at the Early Childhood Development Research Center. Your latest project investigates how infants respond to different combinations of face race and music emotion. In specific you are interested in whether infants associate own- and other-race faces with music of different emotional valences (happy and sad music).

Your project was completed in collaboration with your colleagues in China. While you were responsible for designing your experiment, your collaborators were responsible for recruiting participants and collecting your data.

Chinese infants (3 to 9 months old) were recruited to participate in your experiment. Each infant was randomly assigned to one of the four face-race + music conditions where they saw a series of neutral own- or other-race faces paired with happy or sad musical excerpts.

- Own-race + happy-music condition (own-happy)

- Own-race + sad-music (own-sad)

- Other-race + happy music (other-happy)

- Other-race + sad music (other-sad)

In the own-happy, infants watched six Asian face videos sequentially paired with six happy musical excerpts. In other-sad, infants watched six African face videos sequentially paired with happy musical excerpts. In general, conditions were procedurally the same, except for the face-music composition. Infant eye movements were recorded using an eye tracker.

Your goal is to determine how face race and music emotion, as well as their interaction, influence the looking behaviour of infants.

Your independent variables:

- Face.Race(Chinese/African)

- Music.Emotion(Happy/Sad)

Your dependent variables:

- First.Face.Looking.Time: this is the looking time on the first face video in all four conditions

- Total.Looking.Time: Summ of each infant’s looking times to the subsequent five faces to create a measure of their total looking time to the five faces after.

Let’s begin by loading the required libraries and the dataset as “BabyData”. To do so download the file “infant_eye_tracking_study.csv” and run the following code. Remember to replace ‘path_to_your_downloaded_file’ with the actual path to the dataset on your system.

Note: Shaded boxes hold the R code, with the “#” sign indicating a comment that won’t execute in RStudio.

BabyData <- read.csv('path_to_your_downloaded_file/infant_eye_tracking_study.csv')

library(rstatix) #for performing basic statistical tests

library(dplyr) #for sorting data

library(tidyr) #for data sorting and structure

library(ggplot2) #for visualizing your data

library(readr)

library(ggpubr)

library(gridExtra)

Files to Download:

- infant_eye_tracking_study.csv

Please complete the accompanying exercises to the best of your abilities.

An interactive H5P element has been excluded from this version of the text. You can view it online here:

https://ecampusontario.pressbooks.pub/radpnb/?p=30#h5p-3

Answer Key

Exercise 1: Data Preparation and Exploration

Note: Shaded boxes hold the R code, while the white boxes display the code’s output, just as it appears in RStudio.

The “#” sign indicates a comment that won’t execute in RStudio.

1. Display the first few rows to understand your dataset.

summary(BabyData) # Viewing the summary of the dataset to check for inconsistencies

## Age.in.Days Condition Face.Race Music.Emotion Age.Group

## 1 93 Other-Race Happy Music African happy 3

## 2 98 Other-Race Happy Music African happy 3

## 3 93 Other-Race Happy Music African happy 3

## 4 93 Other-Race Happy Music African happy 3

## 5 93 Other-Race Happy Music African happy 3

## 6 100 Other-Race Happy Music African happy 3

## Total.Looking.Time First.Face.Looking.Time Participant.ID

## 1 44.035 8.273 HJOGM7704U

## 2 18.324 6.938 JHSEG5414N

## 3 24.600 4.225 OCQFX4970K

## 4 12.919 7.537 KLDOF5559R

## 5 12.755 4.230 HHPGJ9661Y

## 6 38.777 9.351 NVCPX9518V

2. Use relocate() to re-order your columns such that your “Participant.ID” column appears as the first column in your dataset.

BabyData <- BabyData %>% relocate(Participant.ID, .before = Age.in.Days)

3. Check your data for any missing values. Remove any rows with missing or NA values from the dataset.

sum(is.na(BabyData)) # Checking for missing values in the dataset

BabyData <- BabyData[!is.na(BabyData$First.Face.Looking.Time), ]

## Participant.ID Age.in.Days Condition Face.Race

## Length:193 Min. : 79.0 Length:193 Length:193

## Class :character 1st Qu.:127.0 Class :character Class :character

## Mode :character Median :185.0 Mode :character Mode :character

## Mean :189.3

## 3rd Qu.:246.0

## Max. :316.0

##

## Music.Emotion Age.Group Total.Looking.Time First.Face.Looking.Time

## Length:193 Min. :3.000 Min. : 1.654 Min. : 0.160

## Class :character 1st Qu.:3.000 1st Qu.:20.671 1st Qu.: 5.309

## Mode :character Median :6.000 Median :30.381 Median : 7.495

## Mean :6.093 Mean :29.196 Mean : 7.041

## 3rd Qu.:9.000 3rd Qu.:38.196 3rd Qu.: 9.185

## Max. :9.000 Max. :50.000 Max. :11.823

## NA's :3

4. Check your data again for any missing values and check data consistency.

sum(is.na(BabyData)) # Checking for missing values in the dataset

summary(BabyData) # Viewing the summary of the dataset to check for inconsistencies

## Participant.ID Age.in.Days Condition Face.Race

## Length:193 Min. : 79.0 Length:193 Length:193

## Class :character 1st Qu.:127.0 Class :character Class :character

## Mode :character Median :185.0 Mode :character Mode :character

## Mean :189.3

## 3rd Qu.:246.0

## Max. :316.0

##

## Music.Emotion Age.Group Total.Looking.Time First.Face.Looking.Time

## Length:193 Min. :3.000 Min. : 1.654 Min. : 0.160

## Class :character 1st Qu.:3.000 1st Qu.:20.671 1st Qu.: 5.309

## Mode :character Median :6.000 Median :30.381 Median : 7.495

## Mean :6.093 Mean :29.196 Mean : 7.041

## 3rd Qu.:9.000 3rd Qu.:38.196 3rd Qu.: 9.185

## Max. :9.000 Max. :50.000 Max. :11.823

## NA's :0

5. Check for structure and ensure that your factor columns (Music.Emotion, Face.Race, and Condition) are set-up correctly.

## 'data.frame': 190 obs. of 8 variables:

## $ Participant.ID : chr "HJOGM7704U" "JHSEG5414N" "OCQFX4970K" "KLDOF5559R" ...

## $ Age.in.Days : int 93 98 93 93 93 100 93 91 98 100 ...

## $ Condition : chr "Other-Race Happy Music" "Other-Race Happy Music" "Other-Race Happy Music" "Other-Race Happy Music" ...

## $ Face.Race : chr "African" "African" "African" "African" ...

## $ Music.Emotion : chr "happy" "happy" "happy" "happy" ...

## $ Age.Group : int 3 3 3 3 3 3 3 3 3 3 ...

## $ Total.Looking.Time : num 44 18.3 24.6 12.9 12.8 ...

## $ First.Face.Looking.Time: num 8.27 6.94 4.22 7.54 4.23 ...

BabyData$Face.Race <- as.factor(BabyData$Face.Race)

BabyData$Music.Emotion <- as.factor(BabyData$Music.Emotion)

BabyData$Condition <- as.factor(BabyData$Condition)

6. Check to see if your design is balanced or unbalanced.

table(BabyData$Age.Group, BabyData$Condition) #unbalanced design

##

## Other-Race Happy Music Other-Race Sad Music Own-Race Happy Music

## 3 16 12 12

## 6 15 19 19

## 9 14 17 17

##

## Own-Race Sad Music

## 3 17

## 6 15

## 9 17

Exercise 2: Conducting a Multi-Variable Linear Regression Analysis

7. Conduct a multi-variable linear regression on the first face looking time as the predicted variable, with Group, face race, and their interactions as the predictors. Display the result.

lm_model1 <- lm(First.Face.Looking.Time ~ Age.Group*Face.Race, data = BabyData)

summary(lm_model1)

##

## Call:

## lm(formula = First.Face.Looking.Time ~ Age.Group * Face.Race,

## data = BabyData)

##

## Residuals:

## Min 1Q Median 3Q Max

## -7.4524 -1.4478 0.3645 2.0507 4.5670

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 5.50710 0.75573 7.287 8.75e-12 ***

## Age.Group 0.22815 0.11542 1.977 0.0496 *

## Face.RaceChinese -0.04233 1.05722 -0.040 0.9681

## Age.Group:Face.RaceChinese 0.05036 0.16071 0.313 0.7544

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 2.658 on 186 degrees of freedom

## Multiple R-squared: 0.05411, Adjusted R-squared: 0.03885

## F-statistic: 3.546 on 3 and 186 DF, p-value: 0.01564

8. Conduct a multivariable linear regression similar to the one described in the previous question. You predicted variable should be Total looking time, with Age.Group, Face.Race, Musical.Emotion, and their interactions as the predictors.

lm_model2 <- model <- lm(Total.Looking.Time ~ Age.Group * Face.Race * Music.Emotion, data = BabyData)

summary(lm_model2)

##

## Call:

## lm(formula = Total.Looking.Time ~ Age.Group * Face.Race * Music.Emotion,

## data = BabyData)

##

## Residuals:

## Min 1Q Median 3Q Max

## -24.8431 -8.0316 -0.1786 8.2809 27.7472

##

## Coefficients:

## Estimate Std. Error t value

## (Intercept) 28.0472 4.5406 6.177

## Age.Group -0.5167 0.7144 -0.723

## Face.RaceChinese -11.5424 6.6960 -1.724

## Music.Emotionsad -15.2955 6.6960 -2.284

## Age.Group:Face.RaceChinese 3.0376 1.0229 2.970

## Age.Group:Music.Emotionsad 3.4057 1.0229 3.330

## Face.RaceChinese:Music.Emotionsad 26.8342 9.3820 2.860

## Age.Group:Face.RaceChinese:Music.Emotionsad -5.7421 1.4252 -4.029

## Pr(>|t|)

## (Intercept) 4.16e-09 ***

## Age.Group 0.47045

## Face.RaceChinese 0.08645 .

## Music.Emotionsad 0.02351 *

## Age.Group:Face.RaceChinese 0.00338 **

## Age.Group:Music.Emotionsad 0.00105 **

## Face.RaceChinese:Music.Emotionsad 0.00473 **

## Age.Group:Face.RaceChinese:Music.Emotionsad 8.22e-05 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 11.72 on 182 degrees of freedom

## Multiple R-squared: 0.1742, Adjusted R-squared: 0.1425

## F-statistic: 5.486 on 7 and 182 DF, p-value: 9.76e-06

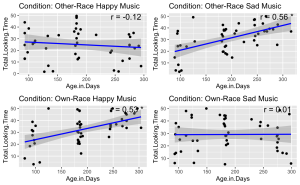

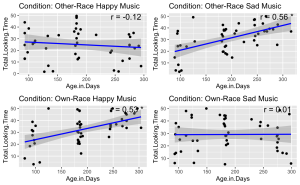

9. Given the significant three-way interaction, conduct Pearson correlation analyses to examine the linear relationship between total face looking time and participant age in days in each condition.

- (a) Begin by identifying all the unique conditions present in the dataset.

- (b) Performs a Pearson correlation analysis between Age group and Total Looking Time for each unique condition.

- (c) Stores and prints the correlation results, including correlation coefficients and p-values, for each condition.

unique_conditions <- unique(BabyData$Condition) #Get unique conditions

correlation_results <- list() ## Initialize a list to store results

# Loop through each condition and perform Pearson correlation

for (condition in unique_conditions) {

# Subset data for the current condition

subset_data <- subset(BabyData, Condition == condition)

subset_data$Age.Group <- as.numeric(as.character(subset_data$Age.Group))

# Perform Pearson correlation

correlation_test <- cor.test(subset_data$Age.Group, subset_data$Total.Looking.Time, method = "pearson")

# Store the result

correlation_results[[condition]] <- correlation_test

}

# Print the results

correlation_results

## $`Other-Race Happy Music`

##

## Pearson's product-moment correlation

##

## data: subset_data$Age.Group and subset_data$Total.Looking.Time

## t = -0.64059, df = 43, p-value = 0.5252

## alternative hypothesis: true correlation is not equal to 0

## 95 percent confidence interval:

## -0.3799180 0.2020743

## sample estimates:

## cor

## -0.09722666

##

##

## $`Other-Race Sad Music`

##

## Pearson's product-moment correlation

##

## data: subset_data$Age.Group and subset_data$Total.Looking.Time

## t = 4.4535, df = 46, p-value = 5.356e-05

## alternative hypothesis: true correlation is not equal to 0

## 95 percent confidence interval:

## 0.3136678 0.7206311

## sample estimates:

## cor

## 0.5488839

##

##

## $`Own-Race Happy Music`

##

## Pearson's product-moment correlation

##

## data: subset_data$Age.Group and subset_data$Total.Looking.Time

## t = 3.8943, df = 46, p-value = 0.0003166

## alternative hypothesis: true correlation is not equal to 0

## 95 percent confidence interval:

## 0.2490419 0.6851408

## sample estimates:

## cor

## 0.4979416

##

##

## $`Own-Race Sad Music`

##

## Pearson's product-moment correlation

##

## data: subset_data$Age.Group and subset_data$Total.Looking.Time

## t = 0.25438, df = 47, p-value = 0.8003

## alternative hypothesis: true correlation is not equal to 0

## 95 percent confidence interval:

## -0.2466891 0.3149919

## sample estimates:

## cor

## 0.03707966

Exercise 3: Visualizing Your Data

10. Visualize the relationship between total face looking time and participant age in days, categorized by different experimental conditions. Each condition should be represented in its own panel within a single figure. Additionally, for each panel:

- (a) Plot each infant’s total face looking time as a function of their age in days.

- (b) Add a blue linear regression line to indicate the trend.

- (c) Display the Pearson correlation coefficient you calculated in the previous question in the upper-right corner of each panel. Round your calculations for display to two decimal places.

- (d) Use different panels for each experimental condition and arrange them in a grid layout.

- (e) Ensure that a significant correlation (p < .05) is indicated with an asterisk.

# Get unique conditions

conditions <- unique(BabyData$Condition)

# Create a list to store plots

plot_list <- list()

# Loop through each condition and create a plot

for (condition in conditions) {

# Subset data for the condition

subset_data <- subset(BabyData, Condition == condition)

# Perform linear regression

fit <- lm(Total.Looking.Time ~ Age.in.Days, data = subset_data)

# Calculate Pearson correlation

cor_test <- cor.test(subset_data$Age.in.Days, subset_data$Total.Looking.Time)

# Create a scatter plot with regression line

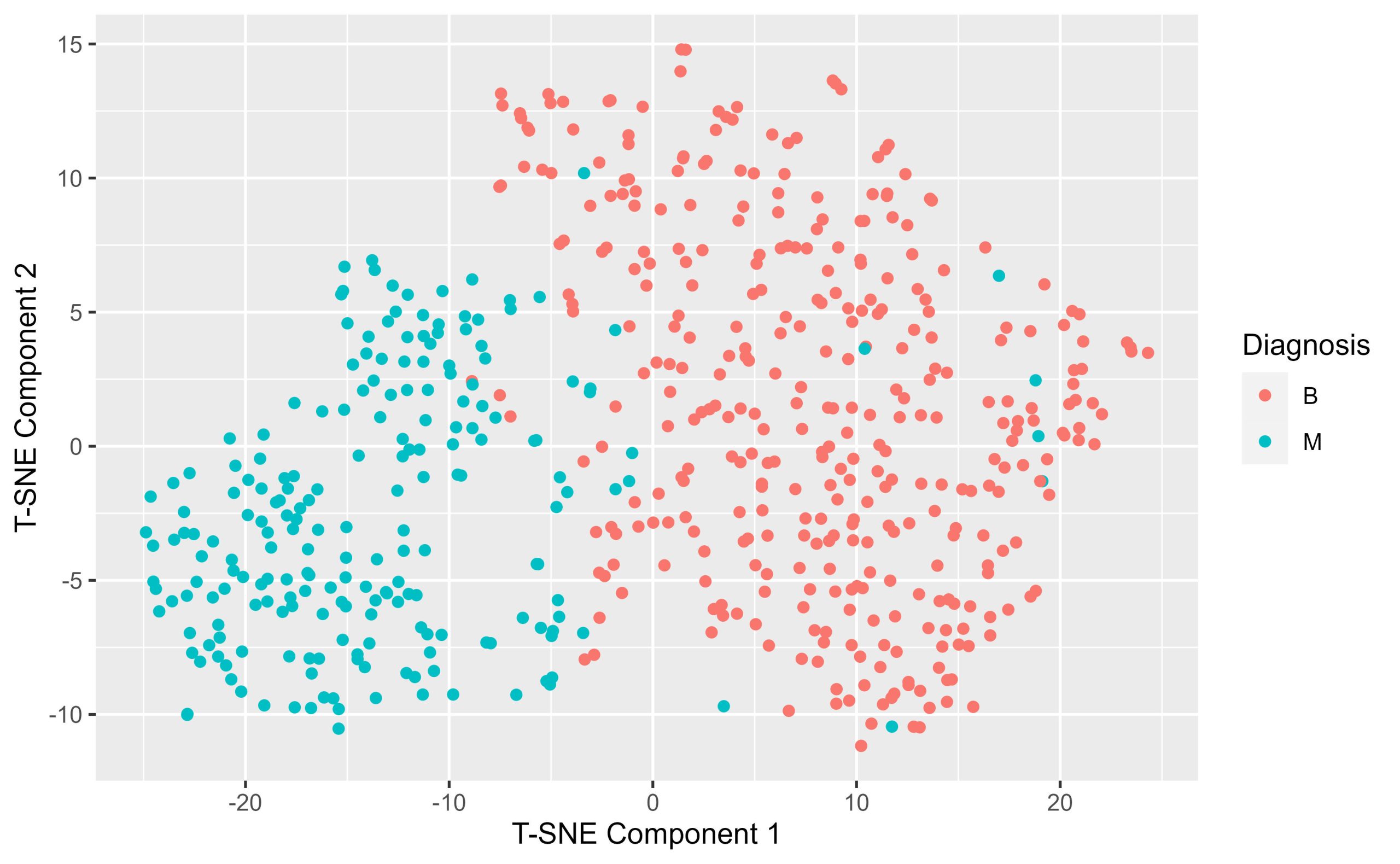

p <- ggplot(subset_data, aes(x = Age.in.Days, y = Total.Looking.Time)) +

geom_point() +

geom_smooth(method = 'lm', color = 'blue') +

ggtitle(paste('Condition:', condition)) +

annotate("text", x = Inf, y = Inf, label = paste('r =', round(cor_test$estimate, 2), ifelse(cor_test$p.value < 0.05, "*", "")),

hjust = 1.1, vjust = 1.1, size = 5)

# Add plot to list

plot_list[[condition]] <- p

}

do.call(grid.arrange, c(plot_list, ncol = 2))

Exercise 4: Conducting Independent Sample T-tests

11. Analyze the impact of music emotional valence on the looking time for own- and other-race faces among different infant age groups (3, 6, and 9 months). Specifically, you are required to perform a series of independent sample t-tests.

- (a) Using the Age.Group column, conduct independent sample t-tests to examine the effects of music emotional valence (Music. Emotion) on the looking time (Total.Looking.Time) for own- and other-race faces (Face.Race) in each age group.

- (b) Ensure your script accounts for different combinations of age groups and music emotional valences.

- (c) Store and display the results of these t-tests in an organized manner.

# Ensure Age.Group is treated as a factor

BabyData$Age.Group <- as.factor(BabyData$Age.Group)

# Perform t-tests for each combination of Age.Group, Music.Emotion, and Face.Race

results <- list()

for(age_group in levels(BabyData$Age.Group)) {

for(music_emotion in unique(BabyData$Music.Emotion)) {

# Filter data for specific age group and music emotion

subset_data <- BabyData %>%

filter(Age.Group == age_group, Music.Emotion == music_emotion)

# Perform the t-test comparing Total.Looking.Time for own- vs. other-race faces

t_test_result <- t.test(Total.Looking.Time ~ Face.Race, data = subset_data)

# Store the results

result_name <- paste(age_group, music_emotion, sep="_")

results[[result_name]] <- t_test_result

}

}

# Print results

print(results)

## $`3_happy`

##

## Welch Two Sample t-test

##

## data: Total.Looking.Time by Face.Race

## t = -0.3153, df = 22.294, p-value = 0.7555

## alternative hypothesis: true difference in means between group African and group Chinese is not equal to 0

## 95 percent confidence interval:

## -12.465591 9.173257

## sample estimates:

## mean in group African mean in group Chinese

## 22.76875 24.41492

##

##

## $`3_sad`

##

## Welch Two Sample t-test

##

## data: Total.Looking.Time by Face.Race

## t = -1.0492, df = 22.86, p-value = 0.3051

## alternative hypothesis: true difference in means between group African and group Chinese is not equal to 0

## 95 percent confidence interval:

## -17.297369 5.658457

## sample estimates:

## mean in group African mean in group Chinese

## 21.32725 27.14671

##

##

## $`6_happy`

##

## Welch Two Sample t-test

##

## data: Total.Looking.Time by Face.Race

## t = 0.43226, df = 27.324, p-value = 0.6689

## alternative hypothesis: true difference in means between group African and group Chinese is not equal to 0

## 95 percent confidence interval:

## -6.401791 9.821475

## sample estimates:

## mean in group African mean in group Chinese

## 32.90100 31.19116

##

##

## $`6_sad`

##

## Welch Two Sample t-test

##

## data: Total.Looking.Time by Face.Race

## t = -0.62019, df = 27.075, p-value = 0.5403

## alternative hypothesis: true difference in means between group African and group Chinese is not equal to 0

## 95 percent confidence interval:

## -9.635393 5.162123

## sample estimates:

## mean in group African mean in group Chinese

## 30.20163 32.43827

##

##

## $`9_happy`

##

## Welch Two Sample t-test

##

## data: Total.Looking.Time by Face.Race

## t = -6.0414, df = 21.29, p-value = 5.08e-06

## alternative hypothesis: true difference in means between group African and group Chinese is not equal to 0

## 95 percent confidence interval:

## -27.28467 -13.31931

## sample estimates:

## mean in group African mean in group Chinese

## 19.13607 39.43806

##

##

## $`9_sad`

##

## Welch Two Sample t-test

##

## data: Total.Looking.Time by Face.Race

## t = 3.0179, df = 26.642, p-value = 0.005546

## alternative hypothesis: true difference in means between group African and group Chinese is not equal to 0

## 95 percent confidence interval:

## 3.335708 17.533234

## sample estimates:

## mean in group African mean in group Chinese

## 38.68853 28.25406

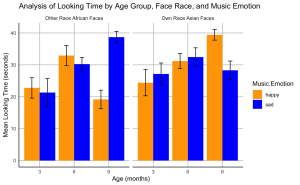

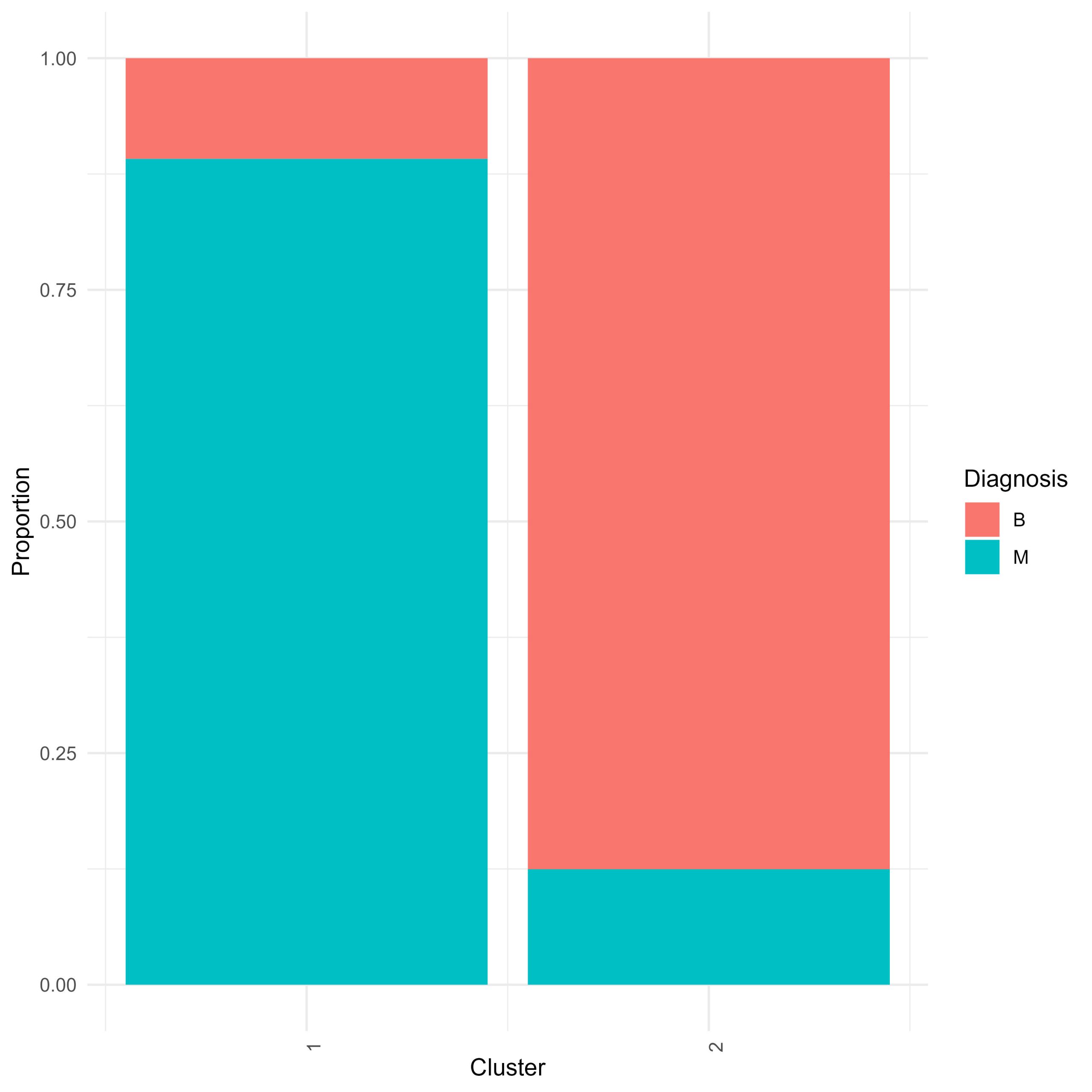

Exercise 5 Creating a Bar Plots

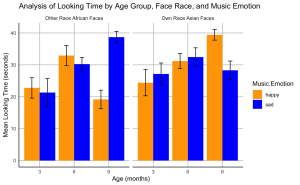

12. Create a bar plot to visualize the effects of music emotional valence on the looking time of infants at different ages for own- and other-race faces.

- (a) The plot should display the mean total looking time on the own- and other-race faces paired with happy or sad music for each age group.

- (b) Include standard error bars in your plot.

- (c) Organize the bars such that bars representing own-race faces are grouped together and labelled “Own Race Asian Faces”, followed by bars for other-race faces grouped together and labelled “Other Race African Faces”.

- (d) The colour of the bars should represent the music emotion: use blue for sad music and orange for happy music.

- (e) Label the x-axis as “Age (months)” and the y-axis as “Mean Looking Time (seconds)”.

- (f) Set the title of your plot as “Analysis of Looking Time by Age Group, Face Race, and Music Emotion”

- (g) Set the theme of your plot to minimal. Make sure the x- and y-axis lines are solid black lines.

- (h)Your plot should not display minor grid lines, major grid lines only.

# Calculate means and standard errors

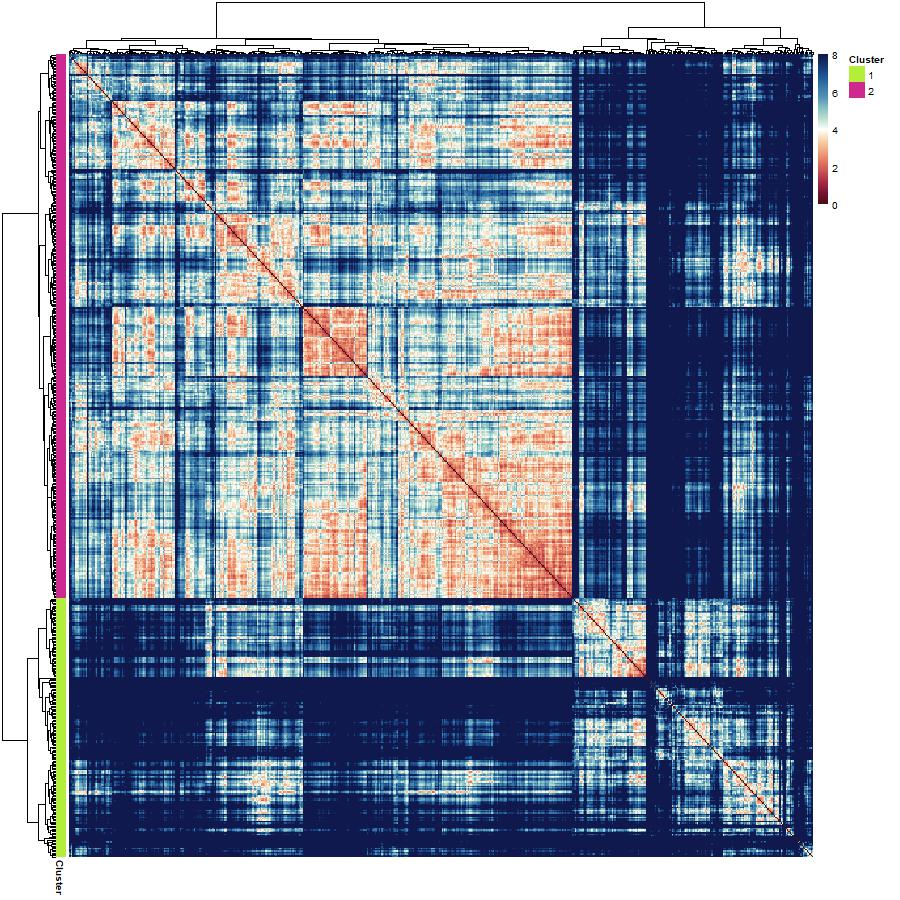

data_summary <- BabyData %>%

group_by(Age.Group, Face.Race, Music.Emotion) %>%

summarize(Mean = mean(Total.Looking.Time),

SE = sd(Total.Looking.Time)/sqrt(n())) %>%

ungroup()

## `summarise()` has grouped output by 'Age.Group', 'Face.Race'. You can override

## using the `.groups` argument.

# Create the bar plot

ggplot(data_summary, aes(x = factor(Age.Group), y = Mean, fill = Music.Emotion)) +

geom_bar(stat = "identity", position = position_dodge()) +

geom_errorbar(aes(ymin = Mean - SE, ymax = Mean + SE),

position = position_dodge(0.9), width = 0.25) +

scale_fill_manual(values = c("happy" = "orange", "sad" = "blue")) +

facet_wrap(~ Face.Race, scales = "free_x", labeller = labeller(Face.Race = c(Chinese = "Own Race Asian Faces", African = "Other Race African Faces"))) +

labs(x = "Age (months)", y = "Mean Looking Time (seconds)", title = "Analysis of Looking Time by Age Group, Face Race, and Music Emotion") +

theme_minimal() +

theme(

panel.grid.minor = element_blank(),

panel.grid.major = element_line(color = "gray", size = 0.5, linetype = "solid"), # Major grid lines

axis.line = element_line(color = "black", size = 0.5) # Axis lines

)

P04: Perception and Sensorimotor Lab

Sevda Montakhaby Nodeh

Perception and Sensorimotor Lab

Welcome to the Perception and Sensorimotor Lab at McMaster University. As a budding cognitive psychologist here, you are about to embark on an explorative journey into the depth effect—a captivating psychological phenomenon that suggests visual events occurring in closer proximity (near space) are processed more efficiently than those farther away (far space). This effect provides a unique window into the cognitive architecture underpinning our sensory experiences, possibly implicating the involvement of the dorsal visual stream, which processes spatial relationships and movements in near space, and the ventral stream, known for its role in recognizing detailed visual information.

Your goal is to dissect whether the depth effect is task-dependent, aligning strictly with the dorsal/ventral stream dichotomy, or whether it represents a universal processing advantage for stimuli in near space across various cognitive tasks.

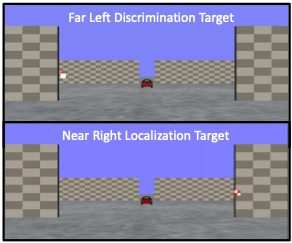

Your research journey begins in your lab. Imagine the lab as a gateway to a three-dimensional world, where the concept of depth is not only a subject of study but also a lived sensory experience for your participants! Seated inside a darkened tent, each participant grips a steering wheel, their primary tool for interaction and inputting responses. Before them, a screen comes to life with a 3D virtual environment meticulously engineered to test the frontiers of depth perception.

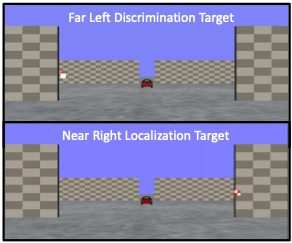

The virtual landscape participants encounter is a model of simplicity and complexity; as illustrated in the figure below, before the participants a ground plane extends into the depth of the screen, intersected by two sets of upright placeholder walls at varying depths—near and far. The walls stand on either side of the central axis, mirrored perfectly across the midline. The textures of the ground and placeholders—a random dot matrix and a checkerboard pattern, respectively—maintain a consistent density. These visual hints, alongside the textural gradients and the retinal size variance between near and far objects, act as subtle cues for depth perception.

From their first-person point of view, participants are asked to:

- Either discriminate the orientation of a red triangular target or localize a checkered square within this 3D dimensional immersive environment.

- The targets could appear in both near and far spaces, demanding keen sensory discrimination and localization.

Through this experiment, you are not just observing the depth effect; you are dissecting it, unearthing the cognitive processes that allow humans to navigate the intricate dance of depth in our daily lives!

Let’s begin by loading the required libraries and the dataset. To do so download the file “NearFarRep_Outlier.csv” and run the following code.

Note: Shaded boxes hold the R code, with the “#” sign indicating a comment that won’t execute in RStudio.

# Loading the required

libraries library(tidyverse) # for data manipulation

library(rstatix) # for statistical analyses

library(emmeans) # for pairwise comparisons

library(afex) # for running anova using aov_ez and aov_car

library(kableExtra) # formatting html ANOVA tables

library(ggpubr) # for making plots

library(grid) # for plots

library(gridExtra) # for arranging multiple ggplots for extraction

library(lsmeans) # for pairwise comparisons

Read in the downloaded dataset “NearFarRep_Outlier.csv” as “NearFarData”. Remember to replace ‘path_to_your_downloaded_file’ with the actual path to the dataset on your system.

NearFarData <- read.csv('path_to_your_downloaded_file/NearFarRep_Outlier.csv')

The dataset contains the response times of participants and includes the following columns:

- “Response” indicates the type of task (Discrimination or Localization)

- “Con” indicating the target depth (Near or Far)

- “TarRT” represents the target response times.

Files to Download:

- NearFarRep_Outlier.csv

Please complete the accompanying exercises to the best of your abilities.

An interactive H5P element has been excluded from this version of the text. You can view it online here:

https://ecampusontario.pressbooks.pub/radpnb/?p=34#h5p-4

Answer Key

Exercise 1: Data Preparation and Exploration

Note: Shaded boxes hold the R code, while the white boxes display the code’s output, just as it appears in RStudio.

The “#” sign indicates a comment that won’t execute in RStudio.

1. Display the first few rows to understand your dataset. Display all column names in the dataset.

head(NearFarData) #Displaying the first few rows

## X ID Response Con TarRT

## 1 1 10 Loc Near 0.6200754

## 2 2 10 Loc Near 0.2219719

## 3 3 1 Loc Near 0.2270377

## 4 4 9 Loc Near 0.5270686

## 5 5 25 Loc Near 0.2272455

## 6 6 18 Loc Near 0.2292785

## [1] "X" "ID" "Response" "Con" "TarRT"

2. Set up “Response” and “Con” as factors, then check the structure of your data to make sure your factors and levels are set up correctly.

NearFarData <- NearFarData %>%

convert_as_factor(Response, Con)

str(NearFarData)

## 'data.frame': 11154 obs. of 5 variables:

## $ X : int 1 2 3 4 5 6 7 8 9 10 ...

## $ ID : int 10 10 1 9 25 18 4 9 8 18 ...

## $ Response: Factor w/ 2 levels "Disc","Loc": 2 2 2 2 2 2 2 2 2 2 ...

## $ Con : Factor w/ 2 levels "Far","Near": 2 2 2 2 2 2 2 2 2 2 ...

## $ TarRT : num 0.62 0.222 0.227 0.527 0.227 ...

3. Perform basic data checks for missing values and data consistency.

sum(is.na(NearFarData)) # Checking for missing values in the dataset

4. Convert the values in your dependent measures column “TarRT” to seconds.

NearFarData$TarRT <- NearFarData$TarRT * 1000

Exercise 2: Visualizing Your Data

- Using the “dplyr” package, write R code to calculate the mean response time and the standard error of the mean (SERT) for each combination of your two factors (Response and Con).

# Calculate means and standard errors for each combination of 'Response' and 'Con'

summary_df <- NearFarData %>%

group_by(Response, Con) %>%

summarise(

MeanRT = mean(TarRT),

SERT = sd(TarRT) / sqrt(n())

)

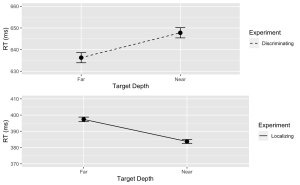

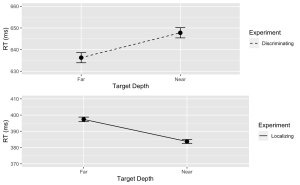

6. Using the “ggplot2” package, create a line plot with error bars for the Discrimination task.

- (a) The x-axis should represent the target depth (Con), and be labelled “Target Depth”.

- (b) The y-axis should represent the mean response time (MeanRT), and be labelled “RT (ms)”

- (c) Error bars should represent the standard error of the mean (SERT).

- (d) Ensure the line type is solid.

- (e) Set the minimum value of your y-axis to 630 and the maximum to 660.

# Now, using ggplot to create the plot

Disc.plot <- ggplot(data = filter(summary_df, Response=="Disc"), aes(x = Con, y = MeanRT, group = Response)) +

geom_line(aes(linetype = "Discriminating")) + # Add a linetype aesthetic

geom_errorbar(aes(ymin = MeanRT - SERT, ymax = MeanRT + SERT), width = 0.1) +

geom_point(size = 3) +

theme_gray() +

labs(

x = "Target Depth",

y = "RT (ms)",

color = "Experiment",

linetype = "Experiment") +

scale_linetype_manual(values = "dashed") + # Set the linetype for "Disc" to dashed

ylim(630, 660) # Set the y-axis limits

7. Similarly, create a line plot with error bars for the Localization task. Use a dashed line for this plot with the following exceptions:

- (a) Ensure the line type is dashed

- (b) Set the minimum value of your y-axis to 370 and the maximum to 410.

Loc.plot <- ggplot(data = filter(summary_df, Response=="Loc"), aes(x = Con, y = MeanRT, group = Response)) +

geom_line(aes(linetype = "Localizing")) + # Add a linetype aesthetic

geom_errorbar(aes(ymin = MeanRT - SERT, ymax = MeanRT + SERT), width = 0.1) +

geom_point(size = 3) +

theme_gray() +

labs(

x = "Target Depth",

y = "RT (ms)",

color = "Experiment",

linetype = "Experiment") +

scale_linetype_manual(values = "solid") + # Set the line type for "Disc" to dashed

ylim(370, 410) # Set the y-axis limits

8. Finally, use the grid.arrange() function from the “gridExtra” package to stack the plots for the Discrimination and Localization tasks on top of each other.

grid.arrange(Disc.plot, Loc.plot, ncol = 1) # Stack the plots on top of each other

Exercise 3: ANOVA Analysis

9. Using the “anova_test” function, conduct a two-way between-subjects ANOVA to investigate the effects of Con (Condition) and Response (Task type) on the target response times (TarRT). After running the ANOVA, use the “get_anova_table” function to present the results.

anova <- anova_test(

data = NearFarData, dv = TarRT, wid = ID,

between = c(Con, Response), detailed = TRUE, effect.size = "pes")

## Warning: The 'wid' column contains duplicate ids across between-subjects

## variables. Automatic unique id will be created

get_anova_table(anova)

## ANOVA Table (type III tests)

##

## Effect SSn SSd DFn DFd F p p<.05

## 1 (Intercept) 2.972143e+09 111582983 1 11150 296993.268 0.00e+00 *

## 2 Con 3.185839e+03 111582983 1 11150 0.318 5.73e-01

## 3 Response 1.762985e+08 111582983 1 11150 17616.741 0.00e+00 *

## 4 Con:Response 4.390483e+05 111582983 1 11150 43.872 3.67e-11 *

## pes

## 1 9.64e-01

## 2 2.86e-05

## 3 6.12e-01

## 4 4.00e-03

Exercise 4: Post Hoc Analysis

- Use “lm” function to fit a linear model to your data. Make sure to specify your dependent variable, independent variables, and interaction terms.

## Fitting a linear model to data

lm_model <- lm(TarRT ~ Con * Response, data = NearFarData)

- Use the “emmeans” function to get the estimated marginal means for your factors and their interaction. Then, use the pairs function to perform pairwise comparisons.

- (a) Set the adjust parameter in the test function to “Tukey” for Tukey’s honest significant difference test, to adjust for multiple comparisons to control the family-wise error rate.

# Get the estimated marginal means

emm <- emmeans(lm_model, specs = pairwise ~ Con * Response)

12. Print and review the results of your post hoc analysis. The output will provide a comparison of each pair of group levels, the estimated difference, the standard error, the t-value, and the adjusted p-value for each comparison.

# View the results

print(post_hoc_results)

## contrast estimate SE df t.ratio p.value

## Far Disc - Near Disc -11.5 2.70 11150 -4.250 0.0001

## Far Disc - Far Loc 238.9 2.67 11150 89.348 <.0001

## Far Disc - Near Loc 252.6 2.67 11150 94.581 <.0001

## Near Disc - Far Loc 250.4 2.69 11150 93.131 <.0001

## Near Disc - Near Loc 264.0 2.68 11150 98.341 <.0001

## Far Loc - Near Loc 13.6 2.66 11150 5.124 <.0001

##

## P value adjustment: tukey method for comparing a family of 4 estimates

Sevda Montakhaby Nodeh

EdCog Lab

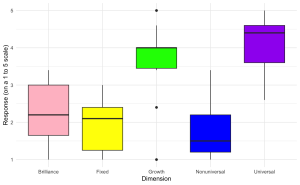

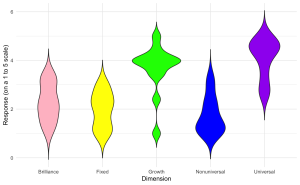

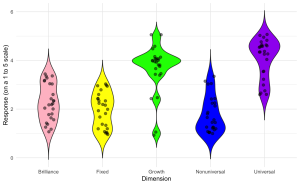

You are a researcher in the EdCog Lab at McMaster University. The Lab is conducting a study aimed at understanding the beliefs of instructors about student abilities in STEM (Science, Technology, Engineering, and Math) disciplines. This study is motivated by a growing body of literature suggesting that instructors’ beliefs about intelligence and success—categorized into brilliance belief (the idea that success requires innate talent), universality belief (the belief that success is achievable by everyone versus only a select few), and mindset beliefs (the view that intelligence and skills are either fixed or can change over time)—play a crucial role in educational practices and student outcomes. Understanding these beliefs is particularly important in STEM fields, where perceptions of innate talent versus learned skills can significantly influence teaching approaches and student engagement.

Experimental Design:

The survey was distributed through LimeSurvey to instructors across the Science, Health Sciences, and Engineering faculties. Participants were asked a series of Likert-scale questions (ranging from strongly disagree to strongly agree) aimed at assessing their beliefs in each of the three areas. Additional demographic and background questions were included to control for variables such as years of teaching experience, field of specialization, and level of education.

- Brilliance Belief: The belief that only those with raw, innate talent can achieve success in their field.

- Universality Belief: The belief that success is achievable for everyone, assuming the right effort and strategies are employed.

- Mindset Beliefs: Instructors’ views on the nature of intelligence and skills—whether they are fixed traits or can be developed over time.

The sample data file (“EdCogData.xlsx) for this exercise is structured as such:

- ID: A unique identifier for each respondent.

- Brilliance1 to Brilliance5: Responses to statements measuring the belief in brilliance as a requirement for success.

- A higher score in these columns indicates a belief that brilliance is a requirement for success.

- MindsetGrowth1 to MindsetGrowth5: Responses to questions aimed at assessing the belief in a growth mindset, suggesting that intelligence and abilities can develop over time.

- A higher score in these columns indicates a strong growth mindset.

- Nonuniversality1 to Nonuniversality5: Responses to statements measuring beliefs counter to universality, meaning that not everyone can succeed (i.e., success is not universal).

- A higher score in these columns indicates a non-universal mindset to success.

- Universality1 to Universality5: Responses to statements measuring the belief in universality, or the idea that success is achievable by anyone with sufficient effort.

- A higher score in these columns indicates a belief that with enough effort success is achievable (i.e., success is universal)

- MindsetFixed1 to MindsetFixed5: Responses to questions aimed at assessing the belief in a fixed mindset regarding intelligence and abilities. A fixed mindset believes that intelligence, talents, and abilities are fixed traits. They think these traits are innate and cannot be significantly developed or improved through effort or education.

- A higher score in these columns indicates a strong fixed mindset.

Getting Started: Loading Libraries, setting the working directory, and loading the dataset

Let’s begin by running the following code in RStudio to load the required libraries. Make sure to read through the comments embedded throughout the code to understand what each line of code is doing.

Note: Shaded boxes hold the R code, with the “#” sign indicating a comment that won’t execute in RStudio.

# Here we create a list called "my_packages" with all of our required libraries

my_packages <- c("tidyverse", "readxl", "xlsx", "dplyr", "ggplot2")

# Checking and extracting packages that are not already installed

not_installed <- my_packages[!(my_packages %in% installed.packages()[ , "Package"])]

# Install packages that are not already installed

if(length(not_installed)) install.packages(not_installed)

# Loading the required libraries

library(tidyverse) # for data manipulation

library(dplyr) # for data manipulation

library(readxl) # to read excel files

library(xlsx) # to create excel files

library(ggplot2) # for making plots

Make sure to have the required dataset (“EdCogData.xlsx“) for this exercise downloaded. Set the working directory of your current R session to the folder with the downloaded dataset. You may do this manually in R studio by clicking on the “Session” tab at the top of the screen, and then clicking on “Set Working Directory”.

If the downloaded dataset file and your R session are within the same file, you may choose the option of setting your working directory to the “source file location” (the location where your current R session is saved). If they are in different folders then click on “choose directory” option and browse for the location of the downloaded dataset.

You may also do this by running the following code:

Once you have set your working directory either manually or by code, in the Console below you will see the full directory of your folder as the output.

Read in the downloaded dataset as “edcogData” and complete the accompanying exercises to the best of your abilities.

# Read xlsx file

edcog = read_excel("EdCogData.xlsx")

Files to Download:

- EdCogData.xlsx

An interactive H5P element has been excluded from this version of the text. You can view it online here:

https://ecampusontario.pressbooks.pub/radpnb/?p=39#h5p-5

Answer Key

Exercise 1: Data Preparation and Exploration

Note: Shaded boxes hold the R code, while the white boxes display the code’s output, just as it appears in RStudio. The “#” sign indicates a comment that won’t execute in RStudio.

Load the dataset into RStudio and inspect its structure.

- How many rows and columns are in the dataset?

- What are the column names?

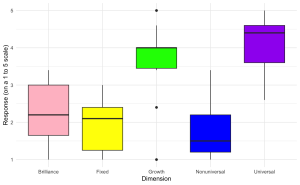

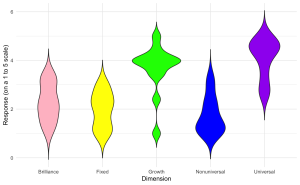

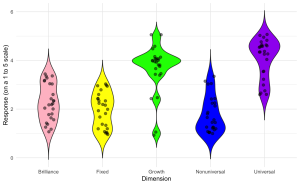

head(edcogData) # View the first few rows of the dataset